ISE Cryptography — Lecture 05

Public Key Cryptography

Welcome back

- Last week we closed the symmetric story: confidentiality, integrity, authenticated encryption

- Today we cross the bridge that every symmetric primitive dodged

- Alice and Bob can now build unforgeable, unreadable messages under a shared key

- The remaining question is the same one we’ve been ignoring since Lecture 01: where does that shared key come from?

- This is where the EPIC project finds its identity layer

- Key establishment (today), signatures and CCA security (L06), AEAD composition (L07), full end-to-end (L08 HPKE)

- Every primitive from here plugs into the capstone

The Key Distribution Problem

About that shared key we’ve been assuming…

Why Symmetric Alone is Not Enough

- Four lectures built powerful symmetric primitives

- Stream ciphers, block ciphers, MACs, hash functions, authenticated encryption

- Every single one assumes Alice and Bob already share a secret key

- How do they get that key in the first place?

- Can’t send it in plaintext: an eavesdropper reads it

- Can’t encrypt it: that needs a key too, and we’re back where we started

- Ever meet up with Jeff Bezos to agree a secret key for amazon.com?

- Kerckhoffs’s principle: the key is the only secret

- Key establishment is the existential problem of symmetric cryptography

- Every secure messaging app, every TLS handshake, every VPN has to solve it

- Alice and Bob need a protocol that exchanges a key over a public channel

- Today’s version is anonymous: no identity binding yet

- Authentication comes in L06 via digital signatures and PKI

Key Exchange Protocol

- A key exchange protocol is a conversation between Alice and Bob over a public channel

- They take turns sending messages that anyone can read

- At the end, both arrive at the same secret value \(k\)

- Neither party picks \(k\) up front

- They each contribute randomness. The shared value falls out of the conversation.

- Even Alice and Bob don’t know what \(k\) will be until the protocol finishes

- The transcript \(T_P\) is everything the eavesdropper sees

- i.e. the sequence of messages exchanged, visible to anyone listening

- Alice and Bob’s private random choices stay hidden

- The security question: given the transcript, can an adversary recover \(k\)?

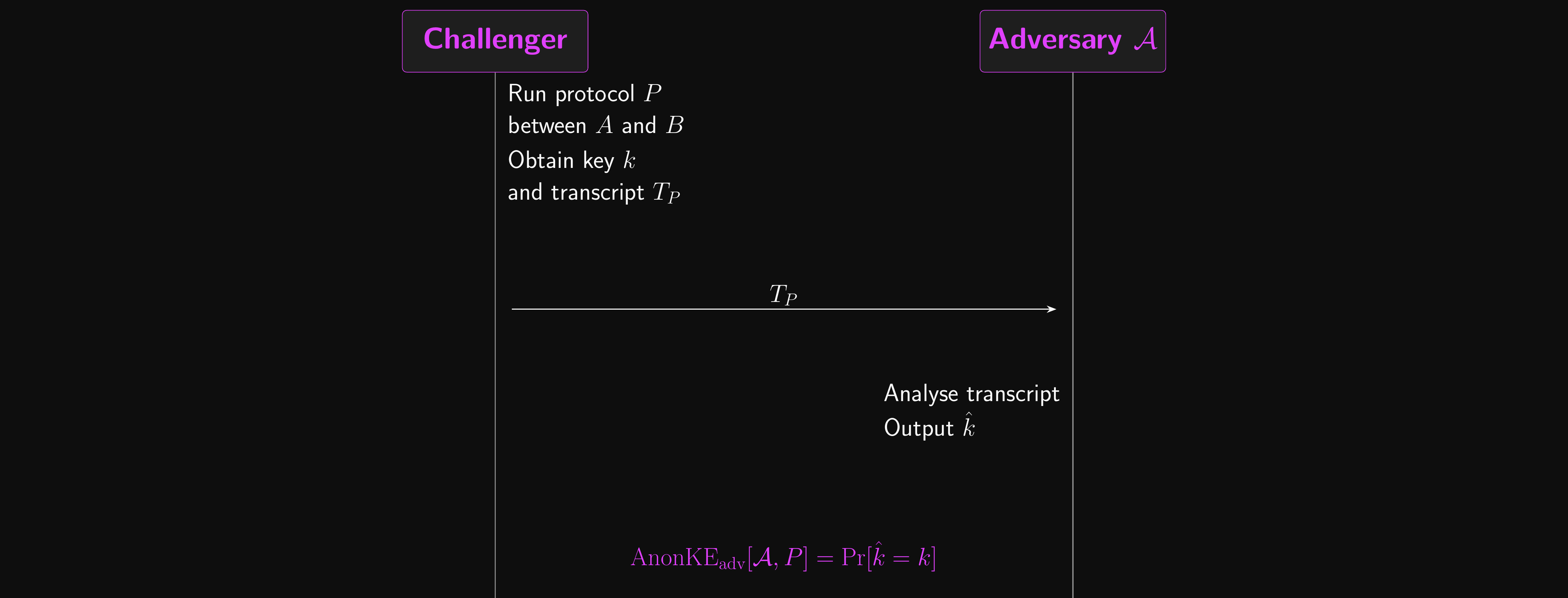

Key Exchange Protocol: Attack Game

- We formalise that question as an attack game before we try to build anything

- The challenger runs the protocol between Alice and Bob, producing transcript \(T_P\) and key \(k\)

- The challenger sends \(T_P\) to the eavesdropping adversary \(\mathcal{A}\)

- \(\mathcal{A}\) outputs a guess \(\hat{k}\) (the hat is convention for an estimator)

- \(\mathcal{A}\)’s advantage: \(\text{AnonKE}_\text{adv}[\mathcal{A}, P] = \Pr[\hat{k} = k]\)

- The protocol is secure against eavesdroppers if this advantage is negligible for every efficient \(\mathcal{A}\)

- All abstract for now. In a few slides, Diffie-Hellman arrives as our first concrete \(P\).

Key Exchange: Attack Game

Key Exchange Protocol: Security

- This definition has more holes than Emmental, and it’s worth calling them out before we build on it

- Passive adversary only. We assume the eavesdropper doesn’t tamper with messages

- This is unrealistic! Real attackers don’t just listen, they rewrite traffic

- We’ll patch this with signatures and PKI in L06

- All-or-nothing key guess. We only measure whether the adversary recovers the entire \(k\)

- They could guess 90% of the bits every time and the definition would still count the protocol as secure

- Anonymous. Nothing in the protocol identifies Alice or Bob

- If Mallory pretends to be Bob, Alice has no way to tell

- Weak security aside, can we do this with just the symmetric tools we already have?

- Actually, yes: Merkle puzzles (1974) gave the first public-channel key exchange, using only symmetric primitives

- But the security gap between honest parties and the attacker is only quadratic, too weak in practice

- Public-key primitives (DH, RSA) give us super-polynomial gaps, which is what the rest of this lecture is about

Diffie-Hellman Key Exchange (DHKE)

No mayonnaise jokes, please.

The Shape of a Key Agreement

- A concrete key exchange protocol needs two functions: a share function \(E\) and a combine function \(F\)

- Alice chooses a random secret \(\alpha\), computes her share \(E(\alpha)\), and sends it to Bob

- Bob chooses a random secret \(\beta\), computes his share \(E(\beta)\), and sends it to Alice

- With the information each now has, both independently compute \(F(\alpha, \beta)\)

- \(F(\alpha, \beta)\) is the shared key

- The share function \(E\) and combine function \(F\) must satisfy these properties

- \(E\) should be easy to compute

- \(F(\alpha, \beta)\) should be easy to compute given \(\alpha\) and \(E(\beta)\)

- \(F(\alpha, \beta)\) should be easy to compute given \(E(\alpha)\) and \(\beta\)

- \(F(\alpha, \beta)\) should be hard to compute given \(E(\alpha)\) and \(E(\beta)\)

- This implies that \(E\) is hard to invert. Why?

- Efficient for Alice and Bob, hard for an adversary. Let’s find concrete \(E\) and \(F\).

Logarithms: the One-Way Function We Need

- Recall the shape we need: a share function \(E\) that’s easy to compute but hard to invert, plus a combine function \(F\) we can compute two ways

- Exponentiation is easy! Computing \(g^\alpha\) takes roughly \(\log_2 \alpha\) multiplications via square-and-multiply

- Taking logarithms is hard… or at least the right kind of logarithm is

- Ordinary real-valued logs are easy

- We’ll meet the hard version, the discrete logarithm, later in this section once the group structure is in place

- With that in mind, let’s try

- Share: \(E(\alpha) = g^\alpha\)

- Combine: \(F(\alpha, \beta) = g^{\alpha\beta}\)

- The protocol works both ways

- \(E(\alpha)^\beta = (g^\alpha)^\beta = g^{\alpha\beta} = (g^\beta)^\alpha = E(\beta)^\alpha\)

- One wrinkle: ordinary exponentiation produces gigantic numbers very quickly!

- We need some way to keep them bounded, and that’s exactly where the “hard” kind of logarithm comes in
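- A minimal sketch of square-and-multiply, assuming plain Python integers; the helper name `power` and the toy values \(g = 5\), \(\alpha = 13\), \(\beta = 7\) are illustrative and not from the slides. It counts multiplications and checks that both ways of combining agree.

```python
def power(g, alpha, modulus=None):
    """Left-to-right square-and-multiply for alpha >= 1.

    Returns (g**alpha, number of multiplications used). If `modulus` is
    given, every intermediate value is reduced mod `modulus`.
    """
    if alpha < 1:
        raise ValueError("this sketch handles alpha >= 1 only")
    reduce = (lambda v: v % modulus) if modulus is not None else (lambda v: v)
    result = reduce(g)
    mults = 0
    # bin(alpha) looks like '0b1...'; skip the prefix and the leading 1 bit,
    # which is already accounted for by starting from result = g.
    for bit in bin(alpha)[3:]:
        result = reduce(result * result)      # square
        mults += 1
        if bit == "1":
            result = reduce(result * g)       # multiply
            mults += 1
    return result, mults


g, alpha, beta = 5, 13, 7
share_a, _ = power(g, alpha)          # E(alpha) = g^alpha
share_b, _ = power(g, beta)           # E(beta)  = g^beta
key_a, _ = power(share_b, alpha)      # Alice: E(beta)^alpha
key_b, _ = power(share_a, beta)       # Bob:   E(alpha)^beta
assert key_a == key_b == g ** (alpha * beta)
```

- Without a modulus the shared value already needs hundreds of bits for these tiny exponents, which is the wrinkle the slide points at.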

Working Modulo a Prime

- The fix for exploding numbers: work modulo a large prime \(p\)

- All values stay in \(\{0, 1, \ldots, p-1\}\), so numbers never blow up

- Our playground has a name: the multiplicative group of integers mod \(p\), written \(\mathbb{Z}_p^*\)

- integers mod \(p\): values drawn from \(\{0, 1, \ldots, p-1\}\)

- multiplicative: we multiply and reduce mod \(p\); addition is a separate story

- group: closed under the operation, associative, has an identity (the number \(1\)), and every element has an inverse

- Why is 0 not in \(\mathbb{Z}_p^*\)? It has no multiplicative inverse (\(0 \cdot x = 0\) for every \(x\))

- 0 does show up in the additive group \(\mathbb{Z}_p = \{0, 1, \ldots, p-1\}\) under \(+\), where it’s the identity

- Why insist that \(p\) is prime?

- Only for prime \(p\) does every element in \(\{1, \ldots, p-1\}\) have a multiplicative inverse mod \(p\)

- With a composite modulus, some elements share factors with \(p\) and fail to be invertible, breaking the group structure
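- A small check of the invertibility claim, as a sketch: it relies on Python 3.8+ where the built-in `pow(x, -1, n)` returns a modular inverse or raises `ValueError`. The moduli 11 and 12 are illustrative choices.

```python
from math import gcd


def invertible_elements(n):
    """Elements of {1, ..., n-1} that have a multiplicative inverse mod n."""
    return [x for x in range(1, n) if gcd(x, n) == 1]


def inverse(x, n):
    """Multiplicative inverse of x mod n; raises ValueError if none exists."""
    return pow(x, -1, n)


# Prime modulus: every non-zero element is invertible.
print(invertible_elements(11))
# Composite modulus: elements sharing a factor with 12 drop out.
print(invertible_elements(12))
```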

The Group Setting

- We work in a finite group \(\mathbb{G}\) where the discrete log problem is hard

- Let \(p\) be a large prime with another large prime \(q\) dividing \(p - 1\)

- Then \(\mathbb{Z}_p^*\) has an element \(g\) of order \(q\)

- The set \(\mathbb{G} = \{g^a \bmod p : a \in \mathbb{Z}_q\}\) has exactly \(q\) elements

- \(\mathbb{G}\) is a subgroup of \(\mathbb{Z}_p^*\): closed under multiplication and inversion

- All exponents are taken from \(\mathbb{Z}_q\)

- For \(u \in \mathbb{G}\) and integers \(a, b\), we have \(u^a = u^b\) whenever \(a \equiv b \pmod q\)

- Public system parameters: \(\mathbb{G}\) (including \(g\) and \(q\)), fixed at setup, shared by all parties

- Typical sizes: \(p\) is 2048+ bits, \(q\) is 256+ bits

- We’ll come back to why \(q\) is prime and what shape \(p\) takes; for now this is where DH lives
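- A toy instance of the group setting, assuming the illustrative parameters \(p = 23\), \(q = 11\), \(g = 2\) (not from the slides, and far too small for real use). It builds \(\mathbb{G}\) explicitly and checks the properties listed above.

```python
p, q, g = 23, 11, 2        # toy parameters: q is prime and divides p - 1 = 22

assert (p - 1) % q == 0
# g != 1 and g^q = 1 mean the order of g divides q; q is prime, so it is q.
assert g != 1 and pow(g, q, p) == 1

# The subgroup generated by g, with exponents taken from Z_q.
G = sorted({pow(g, a, p) for a in range(q)})


def is_closed(elements, modulus):
    """True if the set is closed under multiplication mod `modulus`."""
    s = set(elements)
    return all((x * y) % modulus in s for x in s for y in s)


print(G, len(G), is_closed(G, p))
```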

Diffie-Hellman Key Exchange

- The protocol, running in \(\mathbb{G}\):

- Alice picks a random secret \(\alpha \xleftarrow{R} \mathbb{Z}_q\) and computes \(u \leftarrow g^\alpha\)

- Alice sends \(u\) to Bob

- Bob picks a random secret \(\beta \xleftarrow{R} \mathbb{Z}_q\) and computes \(v \leftarrow g^\beta\)

- Bob sends \(v\) to Alice

- Alice computes \(w \leftarrow v^\alpha = g^{\beta\alpha}\)

- Bob computes \(w \leftarrow u^\beta = g^{\alpha\beta}\)

- The shared secret is \(w = g^{\alpha\beta}\); both sides arrive at the same value

- An eavesdropper sees only \(u = g^\alpha\) and \(v = g^\beta\); recovering either exponent is an instance of the discrete log problem
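- The protocol steps above can be sketched as follows. This is a toy run, assuming the illustrative group \(p = 23\), \(q = 11\), \(g = 2\); the function names `keygen` and `combine` are hypothetical, and real deployments use standardised 2048+ bit groups.

```python
import secrets

P, Q, G = 23, 11, 2    # toy group: G has prime order Q in Z_P^*


def keygen():
    """Pick a secret exponent uniformly from Z_Q; return (secret, share)."""
    secret = secrets.randbelow(Q)
    return secret, pow(G, secret, P)


def combine(own_secret, peer_share):
    """Raise the peer's public share to our own secret exponent."""
    return pow(peer_share, own_secret, P)


alpha, u = keygen()            # Alice sends u = g^alpha to Bob
beta, v = keygen()             # Bob sends v = g^beta to Alice
w_alice = combine(alpha, v)    # v^alpha = g^(beta*alpha)
w_bob = combine(beta, u)       # u^beta  = g^(alpha*beta)
assert w_alice == w_bob

transcript = (u, v)            # everything the eavesdropper sees
```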

Diffie-Hellman Assumption

- The security of DHKE rests on a formal hardness assumption about the group \(\mathbb{G}\)

- For this section, finite-field Diffie-Hellman, operating in the order-\(q\) subgroup of \(\mathbb{Z}_p^*\)

- Put into words

- Given \(g^\alpha, g^\beta \in \mathbb{G}\) with \(\alpha, \beta\) chosen at random

- It should be computationally hard to produce \(g^{\alpha\beta}\)

- So an eavesdropper with only the transcript \((g^\alpha, g^\beta)\) can’t compute the shared secret

- This is the computational Diffie-Hellman assumption (CDH)

- CDH builds on the discrete logarithm assumption (DL): given \(g^\alpha\), you can’t recover \(\alpha\)

- CDH is a stronger assumption (more is being claimed), but seems to hold wherever DL does

- There is no formal proof of either DL or CDH being hard! Both are conjectures, believed to hold based on decades of failed attacks

- Cryptography lives with these unproven assumptions; the best we can do is reduce higher-level schemes to them

Orbits and Cyclic Groups

- Now: why specifically the order-\(q\) subgroup with \(q\) prime? Bad parameter choices can break DH even with the assumption.

- With the group in place, think of “multiply by \(g\)” as a rule that moves between elements

- Pick any \(x\) you like, then follow the rule: \(x \to gx \to g^2 x \to \ldots\)

- Sooner or later you come back to \(x\), tracing out a cycle called the orbit of \(x\)

- Example: \(p = 11\), \(g = 2\). Start from 1 and multiply by 2 each step

- \(1 \to 2 \to 4 \to 8 \to 5 \to 10 \to 9 \to 7 \to 3 \to 6 \to 1\)

- All 10 elements visited in one cycle! \(g = 2\) is a generator

- Starting from any other element gives the same cycle, just entered at a different point

- When one orbit covers the whole group, we call it a cyclic group

A Generator Visits Every Element

Generators and Order

- Not every \(g\) is a generator! Some orbits miss elements.

- Same \(p = 11\), but try \(g = 3\). Start from 1 and multiply by 3

- \(1 \to 3 \to 9 \to 5 \to 4 \to 1\): only 5 elements before we’re back to the start!

- \(g = 3\) has order 5: the orbit closes after 5 steps

- The orbit of 1 is a subgroup \(\{1, 3, 4, 5, 9\}\), just half of \(\mathbb{Z}_{11}^*\)

- So where did the other 5 elements go? Start from any one of them, say \(2\)

- \(2 \to 6 \to 7 \to 10 \to 8 \to 2 \pmod{11}\): another 5-cycle, disjoint from the first

- Same rule, different starting point, different orbit

- Multiplication by 3 partitions \(\mathbb{Z}_{11}^*\) into two orbits of size 5
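- The orbits on the last two slides can be reproduced with a few lines. A sketch; the helper name `orbit` is illustrative.

```python
def orbit(g, start, p):
    """Follow x -> g*x mod p from `start` until the cycle closes.

    Assumes g and start are non-zero mod the prime p, so the walk always
    returns to `start`.
    """
    seen = [start]
    x = (g * start) % p
    while x != start:
        seen.append(x)
        x = (g * x) % p
    return seen


print(orbit(2, 1, 11))   # g = 2: one orbit covering all of Z_11^*
print(orbit(3, 1, 11))   # g = 3: the order-5 subgroup
print(orbit(3, 2, 11))   # g = 3 from a different start: the other orbit
```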

A Non-Generator Splits the Group

Subgroups and the Pohlig-Hellman Attack

- Why does the orbit close early for some \(g\)?

- \(|\mathbb{Z}_{11}^*| = 10 = 2 \times 5\)

- The group has subgroups of every order that divides 10: orders 1, 2, 5, and 10

- \(g = 10\) has order 2 (check: \(10^2 = 100 = 1 \bmod 11\)); even worse!

- If an adversary can confine the problem to a small subgroup, discrete log becomes easy

- An order-5 subgroup? Only 5 values to try. An order-2 subgroup? Trivial

- This is the Pohlig-Hellman attack: decompose the problem into small-subgroup pieces, solve each cheaply, then recombine via CRT
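- A sketch of Pohlig-Hellman in its simplest form, assuming \(g\) generates all of \(\mathbb{Z}_p^*\) and \(p - 1\) is a product of distinct small primes supplied by the caller. The function name and argument layout are illustrative; the general attack also handles repeated prime factors and swaps the brute-force step for something faster.

```python
def pohlig_hellman(g, h, p, prime_factors):
    """Solve g^x = h (mod p) for x in [0, p-1).

    Assumes g has order p - 1 and p - 1 equals the product of the distinct
    primes in `prime_factors`.
    """
    n = p - 1
    x, modulus = 0, 1
    for r in prime_factors:
        # Project both sides into the subgroup of order r.
        g_r = pow(g, n // r, p)
        h_r = pow(h, n // r, p)
        # Only r candidates for x mod r: brute force is cheap.
        x_r = next(k for k in range(r) if pow(g_r, k, p) == h_r)
        # Incremental CRT: adjust x so it also satisfies x = x_r (mod r).
        t = ((x_r - x) * pow(modulus, -1, r)) % r
        x += modulus * t
        modulus *= r
    return x


# p = 11, g = 2, |Z_11^*| = 10 = 2 * 5, as on the slide.
h = pow(2, 7, 11)
print(pohlig_hellman(2, h, 11, [2, 5]))
```

- The work is the sum of the small factors (here \(2 + 5\) trials), not their product.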

Small-Subgroup Confinement

- Why does a weak generator actually cost us? Let’s walk through what an eavesdropper gets to do.

- Suppose Alice and Bob use \(p = 11\) with \(g = 3\)

- We just saw \(g = 3\) has order 5 and reaches only the subgroup \(\{1, 3, 4, 5, 9\}\)

- Trace what the attacker sees on the wire

- Whatever random \(\alpha\) Alice picks, her share \(E(\alpha) = g^\alpha\) lands in \(\{1, 3, 4, 5, 9\}\). Only 5 possible values.

- Same for Bob’s share \(E(\beta)\), and the combined key \(g^{\alpha\beta}\)

- So the attacker just guesses: 5 candidates, win with probability \(1/5\)

- Compare to a strong group, where a blind guess wins with probability \(1/q\) for a subgroup of prime order \(q\): negligible once \(q\) is 256 bits or more

Generator Choice Matters

- The attack scales with subgroup size, not prime size

- Order-5 subgroup: 5 candidates. Order \(10^9\) subgroup: a billion, still trivial vs \(2^{2048}\)

- Generator choice matters as much as prime choice. A 2048-bit prime buys us nothing if \(g\) only reaches a tiny subgroup.

Safe Primes and Prime-Order Subgroups

- Pohlig-Hellman exploits small factors of \(p - 1\). We need a \(p\) that denies it any.

- \(p\) is an odd prime, so \(p - 1\) is even: the factor of 2 is unavoidable

- The simplest secure structure: \(p - 1 = 2q\) where \(q\) is itself prime

- A prime \(p = 2q + 1\) with \(q\) also prime is called a safe prime; \(q\) is a Sophie Germain prime

- The only subgroup orders of \(\mathbb{Z}_p^*\) are \(1, 2, q\), and \(2q\)

- This is exactly the structure \(\mathbb{G}\) needs

- \(\mathbb{G}\), the order-\(q\) subgroup, has prime order

- Every non-identity element generates all of \(\mathbb{G}\) (because \(q\) is prime)

- No small subgroups to exploit

Safe Primes in Practice

- Our example: \(p = 11 = 2 \times 5 + 1\) is a safe prime, \(q = 5\)

- \(\{1, 3, 4, 5, 9\}\) is the order-5 subgroup (generated by \(g = 3\))

- Every element except 1 generates the whole subgroup
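- A toy check of the safe-prime structure, as a sketch: trial-division primality is only sensible at these sizes, and the helper names are illustrative. It uses the fact that for \(p = 2q + 1\) the squares mod \(p\) are exactly the order-\(q\) subgroup.

```python
def is_prime(n):
    """Trial division; fine for toy sizes only."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def is_safe_prime(p):
    """True if p is prime and (p - 1) / 2 is prime as well."""
    return is_prime(p) and is_prime((p - 1) // 2)


def generates_subgroup(h, p, q):
    """True if the powers of h are exactly the order-q subgroup of Z_p^*.

    Assumes p = 2q + 1 is a safe prime, so the subgroup is the set of squares.
    """
    subgroup = {pow(x, 2, p) for x in range(1, p)}
    return {pow(h, k, p) for k in range(q)} == subgroup


print(is_safe_prime(11), [h for h in range(1, 11) if generates_subgroup(h, 11, 5)])
```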

Choosing \(p\), \(q\), \(g\) in Practice

- \(p\): a 2048+ bit safe prime, constructed from nothing-up-my-sleeve constants

- MODP groups use digits of \(\pi\); ffdhe groups use digits of \(e\). No room to hide a backdoor.

- \(q = (p - 1)/2\) for safe-prime groups, so \(q\) is ~2047 bits as well

- Schnorr-style groups instead fix a smaller \(q\) first (~256 bits), then find \(p = qr + 1\) for some integer \(r\)

- \(g\): usually \(g = 2\) for safe-prime groups

- The prime \(p\) is chosen so that 2 lands inside the order-\(q\) subgroup \(\mathbb{G}\) (so \(g = 2\) generates the whole subgroup)

- Small base means fast exponentiation; security rests on the secret exponent \(\alpha\), not on \(g\)

- These are public system parameters: published once at protocol design time, baked into every implementation, used by everyone

Standardised Groups

- So how do we pick our modulus and generator?

- Do NOT roll your own. Use standardised groups.

- Concrete choices you should recognise

Why DH Keys Are So Large

- Why are these numbers so large compared to symmetric keys?

- The best known discrete-log algorithm (number field sieve) runs in sub-exponential time in the prime size

- Security bits grow far more slowly than the prime length: going from 1024 to 2048 bits only lifts the estimate from roughly 80 to roughly 112 bits

- 2048-bit MODP \(\approx\) 112-bit symmetric security; 3072-bit \(\approx\) 128-bit

- For 128-bit security pick 3072-bit MODP, or switch primitives entirely

- Elliptic curves close the gap with much smaller keys; we’ll see why in the next section

- Poor choices have dire consequences, as the next slide shows.
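- A rough sketch for turning a prime length into a number field sieve work estimate, using the standard heuristic cost \(\exp\big((64/9)^{1/3} (\ln p)^{1/3} (\ln \ln p)^{2/3}\big)\) with the \(o(1)\) term dropped. The helper name `nfs_bits` is illustrative, and the output is a ballpark figure, not the standardised security levels quoted above.

```python
from math import log


def nfs_bits(prime_bits):
    """log2 of the heuristic GNFS cost for a prime of `prime_bits` bits.

    Ignores the o(1) term and all memory costs, so treat it as a ballpark.
    """
    ln_p = prime_bits * log(2)
    c = (64 / 9) ** (1 / 3)
    return c * ln_p ** (1 / 3) * log(ln_p) ** (2 / 3) / log(2)


for bits in (1024, 2048, 3072):
    print(bits, round(nfs_bits(bits)))
```

- Running it for 1024, 2048 and 3072 bits shows how the estimate moves with the prime length.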

Logjam: What Happens When You Reuse Weak Parameters

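- 2015: the Logjam attack downgraded TLS connections to 512-bit export-grade DH and then broke them; the same analysis covered IPsec and SSH deployments relying on widely shared 1024-bit primes

- About 8% of the top million HTTPS sites accepted export-grade DH, and the vast majority of those shared just a couple of 512-bit primes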

- The attack has two stages

- Precomputation on that one prime: about a week on academic hardware

- Per-session break: about 70 seconds, reusing that precomputation

- So an attacker amortises a week of compute and then breaks a new session every couple of minutes

- The NSA was believed to be running this against 1024-bit primes on nation-state hardware

- Leaked Snowden documents describe decryption capabilities consistent with this

- Two lessons you should carry out of this lecture

- Parameter size matters: 1024-bit MODP is no longer safe; 2048-bit is the floor

- Parameter reuse matters: if millions of sessions share one prime, the one-prime precomputation cost pays off for every single one of them

Elliptic Curves and ECDH

What if discrete log was on a curve?

Elliptic Curve Cryptography

- An elliptic curve \(E\) over a finite field \(\mathbb{F}_p\) is the set of points \((x, y)\) satisfying:

- \(y^2 = x^3 + ax + b \bmod p\), for some prime \(p > 3\), with \(4a^3 + 27b^2 \not\equiv 0 \pmod p\) so the curve is non-singular

- Plus a special point \(\mathcal{O}\) called the point at infinity

- If \(E/\mathbb{F}_p\) is an elliptic curve…

- Then \(E(\mathbb{F}_p)\) is the set of points on that curve defined over \(\mathbb{F}_p\)

- \(E(\mathbb{F}_p)\) turns out to be a finite abelian group

- If \(E(\mathbb{F}_p)\) is also cyclic…

- …and the discrete log problem is hard in the group \(E(\mathbb{F}_p)\)…

- …we could swap it out and use it in Diffie-Hellman instead!

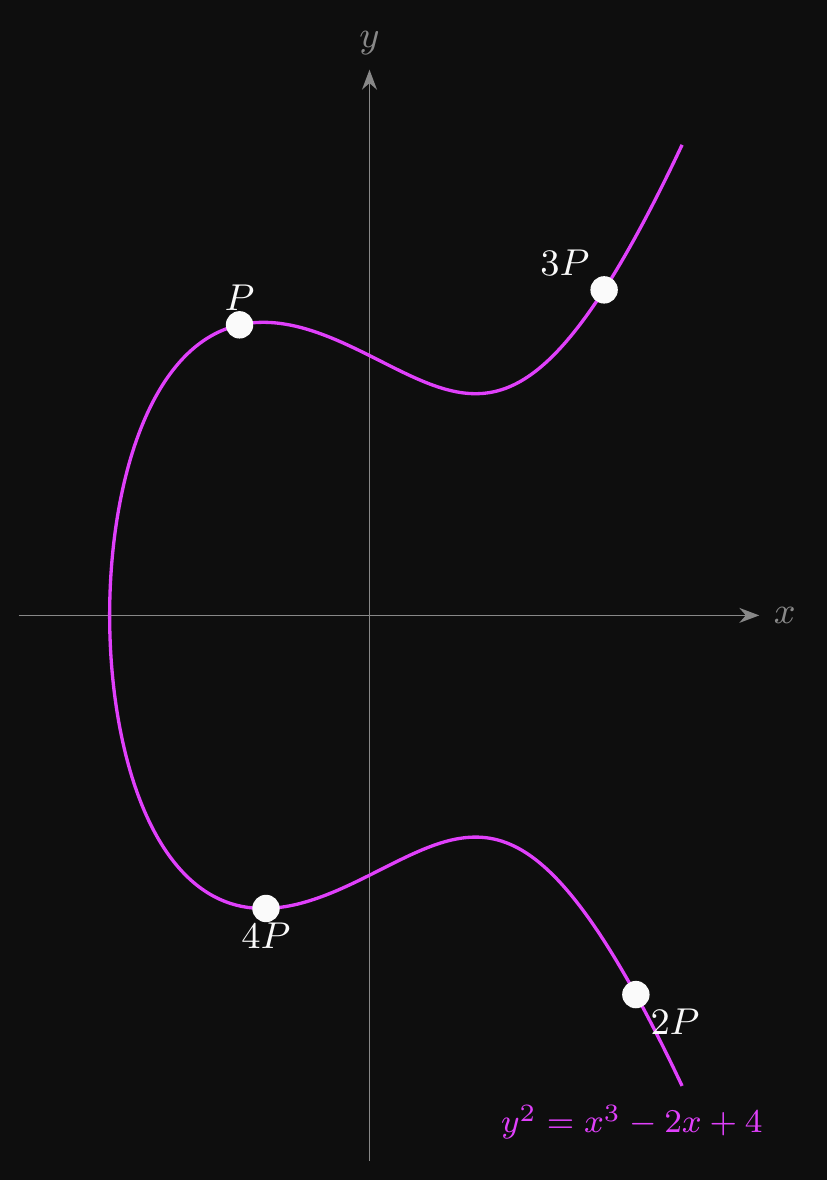

The Group Law

- Points on an elliptic curve form an abelian group under a geometric operation

- Addition \(P + Q\): draw the secant through both points, take the third intersection, reflect across the \(x\)-axis

- Doubling \(P + P\): same recipe with the tangent at \(P\) instead of a secant

- The point at infinity \(\mathcal{O}\) is the identity: \(P + \mathcal{O} = P\) for every \(P\)

- Three illustrations on the next slides, all on the same curve \(y^2 = x^3 - 2x + 4\)

Group Law: Adding Two Points

Group Law: Doubling a Point

Scalar Multiplication and ECDLP

- Scalar multiplication: \(nP = P + P + \cdots + P\) (\(n\) times)

- Efficient via double-and-add, analogous to square-and-multiply for modular exponentiation

- Computing \(nP\) given \(n\) and \(P\) costs roughly \(\log_2 n\) point operations

- Elliptic curve discrete log problem (ECDLP): given \(P\) and \(nP\), recover the integer \(n\)

- No known efficient algorithm; this is the hard problem ECC-based schemes rest on

- The multiples scatter around the curve, hiding \(n\) in the geometry

- That’s the intuition for why ECDLP is hard: \(nP\) looks nothing like \(P\) even for small \(n\)

Double-and-Add: Computing \(13P\)

\[ \begin{aligned} &\textbf{double-and-add}(P,\; n):\\ &\quad \text{write } n \text{ in binary as } (b_{k-1}\, b_{k-2}\, \ldots\, b_0)_2,\; b_{k-1} = 1 \\ &\quad R \leftarrow P \\ &\quad \textbf{for } i = k-2 \textbf{ down to } 0 \textbf{ do:} \\ &\quad\quad R \leftarrow 2R \quad \text{(double)} \\ &\quad\quad \textbf{if } b_i = 1 \textbf{ then } R \leftarrow R + P \quad \text{(add)} \\ &\quad \textbf{return } R \end{aligned} \]
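- The pseudocode above translates almost line for line. In this sketch the group operation is passed in as `add`, and the stand-in group is the integers under \(+\) (so \(nP\) is literally \(n \cdot P\) and easy to check); the `ops` trace is an illustrative addition.

```python
def double_and_add(P, n, add):
    """Compute nP for n >= 1 using only the group operation `add`.

    Returns (nP, list of operations performed).
    """
    bits = bin(n)[2:]              # b_{k-1} ... b_0, leading bit is 1
    R = P
    ops = []
    for b in bits[1:]:             # i = k-2 down to 0
        R = add(R, R)
        ops.append("double")
        if b == "1":
            R = add(R, P)
            ops.append("add")
    return R, ops


# 13 = 1101 in binary: the multiples reached are 1, 2, 3, 6, 12, 13.
result, ops = double_and_add(1, 13, lambda a, b: a + b)
print(result, ops)
```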

Group Law: Scalar Multiplication

Why Elliptic Curves?

- The discrete log problem is harder on elliptic curves than in \(\mathbb{Z}_p^*\)

- The number field sieve (the best attack against finite-field DL) exploits how integers factor into small primes

- Elliptic curve groups have no analogous factorisation structure, so NFS does not apply

- Best known ECDL attack is Pollard’s rho: \(O(\sqrt{q})\) operations, where \(q\) is the group order. It is a generic attack that works in any group and uses nothing specific to curves.

- This gives much smaller keys for the same security level

- 256-bit ECC \(\approx\) 128-bit symmetric security, versus a 3072-bit prime for finite-field DH at the same level

- Smaller keys, faster operations, less bandwidth

- A significant practical advantage for constrained environments and network protocols

- But we can’t just pick any curve. It must be specifically vetted to be secure

- Bad curve choices can introduce weaknesses

An ECC Worked Example

- Take the curve \(E: y^2 = x^3 + x + 6 \bmod 11\)

- Checking each \(x \in \{0, \ldots, 10\}\) for \(y\) solutions yields 12 affine points plus \(\mathcal{O}\)

- \((2, 4), (2, 7), (3, 5), (3, 6), (5, 2), (5, 9)\)

- \((7, 2), (7, 9), (8, 3), (8, 8), (10, 2), (10, 9)\)

- plus the point at infinity \(\mathcal{O}\)

- The group \(E(\mathbb{F}_{11})\) has 13 elements. 13 is prime, so every non-identity point generates the whole group.

- Scalar multiplication with \(P = (2, 4)\), using the chord-and-tangent formulas mod 11

- \(2P = (5, 9)\) (tangent-line doubling)

- \(3P = (8, 8)\) (chord through \(P\) and \(2P\))

- Continuing visits all 12 affine points, and \(13P = \mathcal{O}\) closes the cycle

- Real curves use \(p \approx 2^{256}\) with similarly large prime group orders

- Discrete log is infeasible, yet point operations still run in microseconds
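- The worked example can be checked with a short sketch of affine chord-and-tangent arithmetic on \(y^2 = x^3 + x + 6 \bmod 11\). Representing \(\mathcal{O}\) as `None` and the function names are illustrative choices, and `mul` is a right-to-left variant of double-and-add. Needs Python 3.8+ for `pow(x, -1, m)`.

```python
P_MOD, A, B = 11, 1, 6          # curve y^2 = x^3 + x + 6 over F_11
O = None                        # point at infinity


def on_curve(pt):
    if pt is O:
        return True
    x, y = pt
    return (y * y - (x ** 3 + A * x + B)) % P_MOD == 0


def add(p1, p2):
    """Chord-and-tangent addition in affine coordinates."""
    if p1 is O:
        return p2
    if p2 is O:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return O                                          # P + (-P) = O
    if p1 == p2:
        lam = (3 * x1 * x1 + A) * pow((2 * y1) % P_MOD, -1, P_MOD)   # tangent
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD)          # chord
    lam %= P_MOD
    x3 = (lam * lam - x1 - x2) % P_MOD
    y3 = (lam * (x1 - x3) - y1) % P_MOD
    return (x3, y3)


def mul(n, pt):
    """Scalar multiplication nP, scanning the bits of n from the low end."""
    result, addend = O, pt
    while n:
        if n & 1:
            result = add(result, addend)
        addend = add(addend, addend)
        n >>= 1
    return result


points = [(x, y) for x in range(P_MOD) for y in range(P_MOD) if on_curve((x, y))]
P = (2, 4)
print(len(points), mul(2, P), mul(3, P), mul(13, P))
```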

X25519 and Curve25519

- Curve25519 is one of the most widely used elliptic curves

- Designed by Daniel Bernstein with security and performance as primary goals

- Equation: \(y^2 = x^3 + 486662x^2 + x\) over \(\mathbb{F}_p\) where \(p = 2^{255} - 19\)

- X25519 is the Diffie-Hellman function using Curve25519

- Takes a 32-byte secret key and a 32-byte public key, outputs a 32-byte shared secret

- Used in TLS 1.3, Signal, WireGuard, SSH, and many other protocols

- Why Curve25519 over NIST curves?

- “Safe curve” criteria: no special structure that could enable attacks

- All parameters are mathematically justified, with nothing arbitrary

- NIST curves use seed parameters whose origins were never fully explained

- This mattered after the Dual EC DRBG incident (2013): a NIST-approved random number generator was shown to contain a back door, reportedly placed by the NSA. Trust in NIST-chosen parameters dropped across the industry overnight.

- For the EPIC project, X25519 is the recommended KEM in the HPKE ciphersuite

Trapdoor Functions

One-way, except for Alice.

A Second Approach to Public Keys

- DH got us key agreement from the discrete-log structure of a cyclic group

- Both parties contribute secrets, both derive the same shared value

- Security rests on the CDH assumption

- There is a different way to build public-key primitives: trapdoor functions

- Not a key-agreement protocol. A one-way function with a secret shortcut.

- Alice publishes a way to compute \(F\). Only Alice can invert it, using a trapdoor \(sk\).

- Why learn both? Because they solve different problems

- DH gives native key agreement with forward secrecy when keys are ephemeral

- TDFs give us encryption schemes (today, built on RSA) and signatures (L06, via RSA-FDH) in ways DH does not directly support

- Both frameworks show up in real protocols: TLS 1.3 uses (EC)DH for key exchange AND certificates built on RSA/ECDSA for authentication

One-Way Functions, With and Without a Trapdoor

- We’ve already met one-way functions: a hash is easy to compute, hard to invert

- Given a SHA-256 digest, no known attack recovers a matching preimage faster than brute force

- Plain one-way isn’t enough for public-key crypto. If no one can invert \(F\), then the intended recipient can’t decrypt either

- What we need is a trapdoor: a secret value that lets one specific party invert \(F\) while everyone else still faces a hard problem

- Intuition: think of a padlock

- Alice hands out open padlocks to anyone who asks. Anyone can snap one shut.

- Only Alice holds the key that opens them.

- Shutting the padlock is \(F(pk, \cdot)\). Opening it is \(I(sk, \cdot)\). The key is the trapdoor \(sk\).

- Let’s formalise this so we can build on it

One-Way Trapdoor Functions

- A trapdoor function scheme \(\mathcal{T}\), defined over finite sets \((\mathcal{X}, \mathcal{Y})\), is a triple of algorithms \((G, F, I)\)

- Same named-building-block pattern as ciphers \(\mathcal{E} = (E, D)\) from L01

- The key generation algorithm \(G\) is probabilistic

- Invoked as \((pk, sk) \leftarrow G()\)

- Produces a public key \(pk\) (shareable) and a secret key \(sk\) (the trapdoor)

- The forward function \(F\) is deterministic (F for “forward”, the easy direction)

- Invoked as \(y \leftarrow F(pk, x)\)

- Anyone with \(pk\) can snap the padlock: map \(x \in \mathcal{X}\) to \(y \in \mathcal{Y}\)

Trapdoor Functions: Inverse and Correctness

- The inverse function \(I\) is deterministic (I for “invert”, the hard direction without the trapdoor)

- Invoked as \(x \leftarrow I(sk, y)\)

- Only the holder of \(sk\) can open the padlock: recover \(x\) from \(y\)

- Correctness: for all \((pk, sk)\) output by \(G\) and all \(x \in \mathcal{X}\)

- \(I(sk, F(pk, x)) = x\)

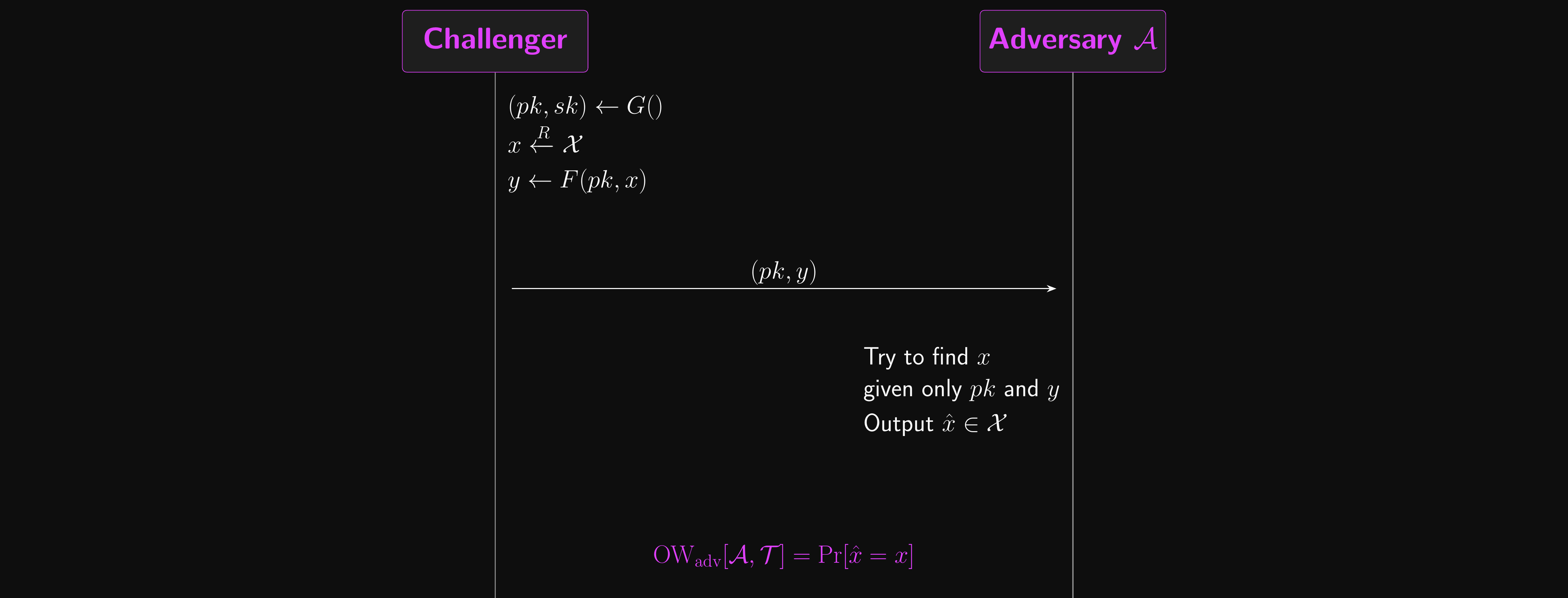

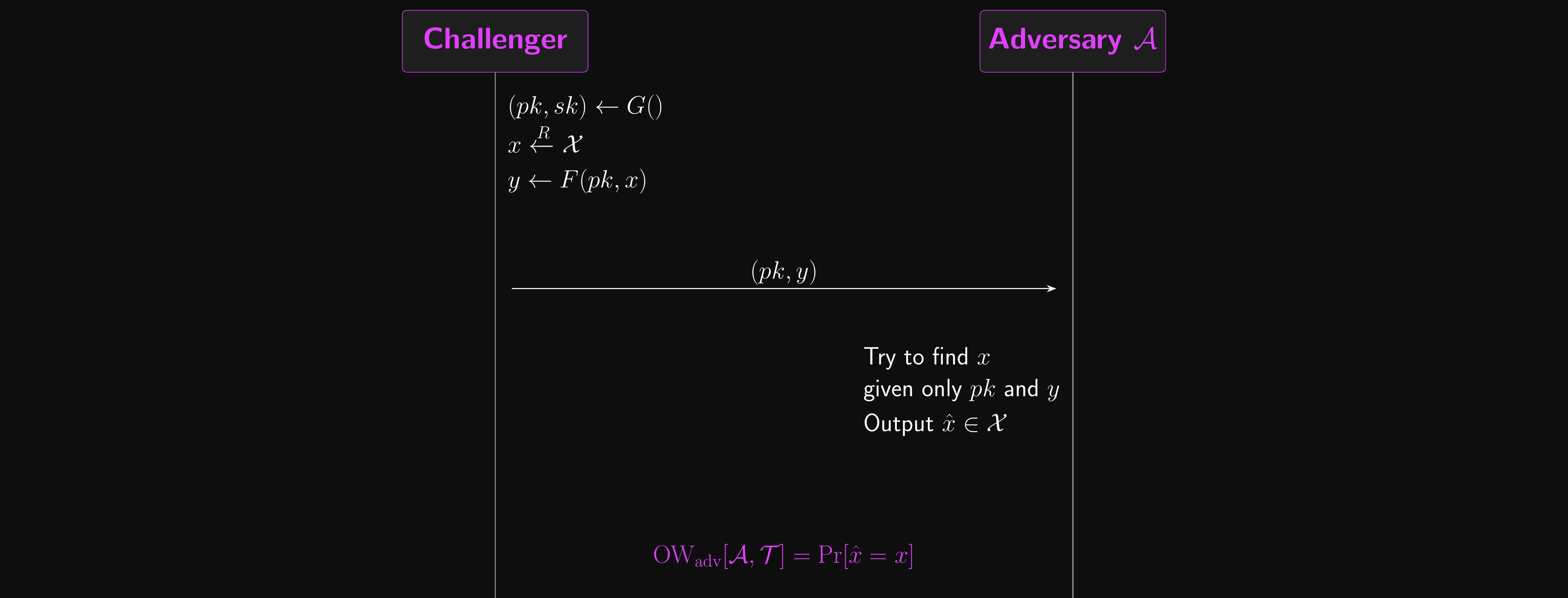

Trapdoors: Attack Game

- The challenger computes:

- \((pk, sk) \leftarrow G()\)

- \(x \xleftarrow{R} \mathcal{X}\)

- \(y \leftarrow F(pk, x)\)

- The challenger sends \((pk, y)\) to the adversary

- The adversary outputs a guess \(\hat{x} \in \mathcal{X}\)

- \(\mathcal{A}\)’s advantage in inverting \(\mathcal{T}\) is the probability that \(\hat{x} = x\)

- \(\text{OW}_\text{adv}[\mathcal{A}, \mathcal{T}] = \Pr[\hat{x} = x]\)

- A trapdoor function scheme \(\mathcal{T}\) is one-way if \(\text{OW}_\text{adv}[\mathcal{A}, \mathcal{T}]\) is negligible for every efficient adversary \(\mathcal{A}\)

- i.e. \(F\) is hard to invert even given the output and the public key

Trapdoor One-Wayness: Attack Game

Trapdoor Permutations

- The TDF definition allows \(\mathcal{X}\) and \(\mathcal{Y}\) to be different sets

- Some elements of \(\mathcal{Y}\) might not have preimages under \(F(pk, \cdot)\)

- The clean special case: \(\mathcal{X} = \mathcal{Y}\)

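- Then \(F(pk, \cdot)\) is bijective, so it’s a permutation on \(\mathcal{X}\): correctness forces \(F(pk, \cdot)\) to be injective, and an injective map from a finite set to itself is a bijection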

- Every output has exactly one preimage, no ambiguity in inversion

- We call \(\mathcal{T}\) a trapdoor permutation scheme defined over \(\mathcal{X}\)

- This is the case we care about most: RSA is a trapdoor permutation (\(\mathbb{Z}_n \to \mathbb{Z}_n\), bijective)

- Non-permutation TDFs exist but are harder to work with in practice

Key Exchange from a Trapdoor Function

- A TDF scheme \(\mathcal{T} = (G, F, I)\) gives us an alternative key exchange construction (compare with DH)

- Alice generates her keys: \((pk, sk) \leftarrow G()\), and sends \(pk\) to Bob

- Bob picks a random \(x \xleftarrow{R} \mathcal{X}\) (this will become the shared key)

- Bob applies the forward function: \(y \leftarrow F(pk, x)\), and sends \(y\) to Alice

- Alice applies the inverse function: \(x \leftarrow I(sk, y)\). Only she can do this!

- The correctness property guarantees Alice and Bob get the same result. The shared secret key is \(x\).

- The transcript consists of \(pk\) (from Alice) and \(y\) (from Bob)

- An eavesdropper gets no better chance than breaking the TDF itself

- Problem solved. If we actually had a one-way trapdoor function scheme, that is.
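- The message flow above can be sketched generically over any \((G, F, I)\). The stand-in scheme below is an assumption for illustration only: a toy permutation on \(\mathbb{Z}_{3233}\) with a fixed key pair, so `toy_G` is not actually probabilistic and nothing here is secure. All function names are hypothetical.

```python
import secrets


def key_transport(gen, fwd, inv, sample_x):
    """Run the TDF-based key exchange; return (alice_key, bob_key, transcript)."""
    pk, sk = gen()                 # Alice generates keys, sends pk to Bob
    x = sample_x(pk)               # Bob samples x from X
    y = fwd(pk, x)                 # Bob applies F and sends y to Alice
    x_alice = inv(sk, y)           # Alice inverts with the trapdoor
    return x_alice, x, (pk, y)     # transcript = what the eavesdropper sees


# --- toy stand-in scheme (illustrative, tiny, fixed keys) ---
def toy_G():
    p, q, e = 61, 53, 17
    n = p * q
    d = pow(e, -1, (p - 1) * (q - 1))
    return (n, e), (n, d)


def toy_F(pk, x):
    n, e = pk
    return pow(x, e, n)


def toy_I(sk, y):
    n, d = sk
    return pow(y, d, n)


def toy_sample(pk):
    return secrets.randbelow(pk[0])    # uniform over Z_n


alice_key, bob_key, transcript = key_transport(toy_G, toy_F, toy_I, toy_sample)
assert alice_key == bob_key
```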

Key Agreement vs Key Transport

- DH and the TDF construction agree the key in different ways

- DH is key agreement: both parties contribute randomness, neither knows the key in advance

- TDF-based KE is key transport: Bob alone picks the key \(x\) and sends it encrypted to Alice

- The distinction matters operationally

- Key transport puts all the entropy on one side, then ships it under the recipient’s public key

- This is what TLS used to do with RSA key transport, before TLS 1.3 dropped it in favour of ephemeral (EC)DH

- Forward secrecy hangs on this difference

- If Alice’s long-term \(sk\) leaks, every transported \(x\) ever sent to her is recoverable from the recorded \(y\) values

- Whereas DH can throw away the per-session secrets after use, leaving nothing to subpoena

- We’ll come back to this in the Hardening section

The Reduction: TDF Security Implies KE Security

- The idea: if \(\mathcal{A}\) breaks the key exchange, we can build \(\mathcal{B}\) that breaks the TDF

- So the KE protocol is at least as hard to break as the TDF itself

- The flow inside \(\mathcal{B}\)

- TDF challenger \(\;\xrightarrow{\;(pk,\,y)\;}\;\) \(\mathcal{B}\) (the OW challenge)

- \(\mathcal{B}\) \(\;\xrightarrow{\;(pk,\,y)\;}\;\) \(\mathcal{A}\) (forwards as a “transcript”)

- \(\mathcal{A}\) \(\;\xrightarrow{\;\hat{x}\;}\;\) \(\mathcal{B}\) (returns its guess)

- \(\mathcal{B}\) \(\;\xrightarrow{\;\hat{x}\;}\;\) TDF challenger (passes the same guess back)

The Reduction: The Bound

- \(\mathcal{B}\) does essentially no work: it forwards the challenge and copies back the answer

- \(\mathcal{A}\) can’t tell the TDF challenge apart from a real protocol transcript (same distribution)

- So \(\mathcal{B}\)’s inversion probability equals \(\mathcal{A}\)’s guessing probability

- Formally: \(\text{AnonKE}_\text{adv}[\mathcal{A}, P] \leq \text{OW}_\text{adv}[\mathcal{B}, \mathcal{T}]\)

- One-way TDF \(\Rightarrow\) secure key exchange protocol

- Same reduction template as PRF\(\to\)MAC from Lecture 04

- We’ll see it again in L06 for RSA-FDH signatures, and in L07 for CCA\(\to\)AE

RSA

The one trapdoor permutation we know about.

RSA

- RSA is a trapdoor permutation: the domain and range are the same set.

- Invented by Rivest, Shamir and Adleman (hence RSA) in 1977

- That’s /rɪˈvɛst/, /ʃəˈmɪər/ and /ˈeɪdəlmən/

- This is basically the only trapdoor permutation we know about

- There are others but they are closely related or equivalent

- Rabin cryptosystem (1979) is the best-known alternative: based on squaring mod \(n\), which is provably as hard as factoring, but decryption returns four candidate plaintexts and you need extra bits to disambiguate. RSA won on ergonomics.

- RSA is arguably a simpler algorithm than most ciphers we’ve looked at.

- You don’t need anything beyond LC maths and modular arithmetic to understand it

- The forward function \(F\) and inverse function \(I\) are one-liners. Let’s see them before we worry about any of the maths.

RSA at a Glance

- Let’s see RSA in four steps before any maths gets in the way

- Pick two large primes \(p\) and \(q\) at random

- Multiply them to get the modulus \(n = pq\)

- Forward on \(x\): \(y = x^e \bmod n\) (the public exponent \(e\) is fixed, almost always 65,537)

- Inverse on \(y\): \(x = y^d \bmod n\) (the secret exponent \(d\) matches \(e\), we’ll see how)

- That’s the whole algorithm! Two primes, one modulus, two exponents that undo each other.

- Forward and inverse, not encrypt and decrypt. RSA is a trapdoor permutation; turning it into encryption needs more work, which is why the PKE section is a separate stop later in the lecture.

- We’ll spend the next few slides plugging things into this picture

- A worked example with small inputs, so we can see the whole thing in action

- Why the inverse undoes the forward (the algebra that links \(e\) and \(d\))

- The constraints we need on \(p\), \(q\), \(e\) to make the algebra hold

RSA: A Worked Example

- Pick tiny primes so we can follow by hand: \(p = 61\), \(q = 53\), \(e = 17\)

- Setting up the keys

- Modulus: \(n = 61 \times 53 = 3233\)

- Handy product: \((p-1)(q-1) = 60 \times 52 = 3120\) (next slide explains why this matters)

- Secret exponent: \(d = e^{-1} \bmod 3120 = 2753\) (extended Euclidean algorithm; the number of division steps is linear in the bit length)

- Sanity check: \(17 \times 2753 = 46801 = 15 \times 3120 + 1\), so \(ed \equiv 1 \pmod{3120}\)

- Public key \((n, e) = (3233, 17)\). Secret key \((n, d) = (3233, 2753)\).

- Forward on \(x = 65\): \(\;y = 65^{17} \bmod 3233 = 2790\)

- Inverse on \(y = 2790\): \(\;x = 2790^{2753} \bmod 3233 = 65\). Back to the original!

- Real RSA uses 1024-bit primes for a 2048-bit modulus. Same algorithm, bigger numbers.
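- The one step of the worked example that is not a direct calculation is finding \(d\). A sketch of the extended Euclidean algorithm that produces it; the function names are illustrative, and Python's built-in `pow(e, -1, m)` does the same job.

```python
def extended_gcd(a, b):
    """Return (g, s, t) with g = gcd(a, b) and s*a + t*b == g."""
    old_r, r = a, b
    old_s, s = 1, 0
    old_t, t = 0, 1
    while r != 0:
        quotient = old_r // r
        old_r, r = r, old_r - quotient * r
        old_s, s = s, old_s - quotient * s
        old_t, t = t, old_t - quotient * t
    return old_r, old_s, old_t


def mod_inverse(e, modulus):
    """Inverse of e mod `modulus`; raises ValueError if gcd(e, modulus) != 1."""
    g, s, _ = extended_gcd(e, modulus)
    if g != 1:
        raise ValueError("e shares a factor with the modulus, so no inverse exists")
    return s % modulus


n, e = 61 * 53, 17
d = mod_inverse(e, 60 * 52)
y = pow(65, e, n)          # forward
x = pow(y, d, n)           # inverse
print(d, y, x)
```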

Why RSA Works

- We’ve seen the recipe. Now the algebra: why does raising to \(d\) undo raising to \(e\)?

- We need to show \(m^{ed} \equiv m \pmod n\) for every \(m \in \mathbb{Z}_n\). Two steps.

- First, a reminder of Euler’s theorem from your in-depth first-year study of number theory, which you all of course recall vividly. For any \(x\) coprime to \(n\),

- \(x^{\phi(n)} \equiv 1 \pmod n\)

- \(\phi(n)\) is the Euler totient: the count of integers in \(\{1, \ldots, n\}\) coprime to \(n\). For \(n = pq\), \(\phi(n) = (p-1)(q-1)\), exactly the “handy product” we saw in the worked example.

- We’ll take Euler’s theorem on faith here; it’s the only number-theory result we need.

RSA Correctness: The Proof

- Step 1: the exponents cancel mod \(\phi(n)\). By construction of \(d\),

- \(d \leftarrow e^{-1} \bmod \phi(n)\)

- so \(ed \equiv 1 \pmod{\phi(n)}\), which means \(ed = 1 + k\phi(n)\) for some integer \(k\)

- Step 2: Euler’s theorem kills the extra factor. For any \(m\) with \(\gcd(m, n) = 1\),

- \(m^{ed} = m^{1 + k\phi(n)} = m \cdot (m^{\phi(n)})^k \equiv m \cdot 1^k \equiv m \pmod n\)

- The remaining \(m\) (multiples of \(p\) or \(q\)) also satisfy \(m^{ed} \equiv m \pmod n\): run the same argument mod \(p\) and mod \(q\) separately, then combine with the Chinese Remainder Theorem

- Key takeaway: RSA’s correctness isn’t magic. The choice \(d = e^{-1} \bmod \phi(n)\) is exactly what forces the exponents to cancel under Euler.
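
The proof handles \(m\) coprime to \(n\). As a sanity check, the sketch below tests Euler's theorem on every unit of the toy modulus from the worked example, and then tests the round trip on every residue, including the multiples of \(p\) and \(q\) that the Euler argument does not cover directly. It is an exhaustive check on one tiny key, not a proof; the variable names are ours.

```python
from math import gcd

p, q, e = 61, 53, 17
n, phi = p * q, (p - 1) * (q - 1)
d = pow(e, -1, phi)

# Euler's theorem on every unit: m^phi(n) == 1 (mod n) when gcd(m, n) == 1.
units = [m for m in range(1, n) if gcd(m, n) == 1]
euler_holds = all(pow(m, phi, n) == 1 for m in units)

# Round trip on *every* residue, including those sharing a factor with n.
round_trip_holds = all(pow(pow(m, e, n), d, n) == m for m in range(n))

print(len(units) == phi, euler_holds, round_trip_holds)  # True True True
```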

RSA: Choosing the Pieces Safely

- The algebra works provided \(p\), \(q\) and \(e\) satisfy a few conditions. Each one falls right out of the proof we just saw.

- \(\gcd(e, p-1) = 1\) and \(\gcd(e, q-1) = 1\)

- We need \(e^{-1} \bmod \phi(n)\) to exist, otherwise \(d\) doesn’t even make sense

- An \(e\) sharing a factor with \(\phi(n)\) has no inverse, and RSA breaks before it starts

- \(q \neq p\)

- If \(p = q\) then \(n = p^2\), which anyone can factor by taking an integer square root, and the security collapses

- The Euler argument would also fall apart: \(\phi(p^2) = p(p-1) \neq (p-1)^2\)

- \(|p - q|\) should not be small (real implementations enforce this)

- Fermat’s factoring method finds \(p\) and \(q\) in roughly \((p - q)^2 / (8\sqrt{n})\) steps, which is almost instant when the primes are close

- Typical rejection threshold: reject \(q\) if \(|p - q| < 2n^{1/4}\)

- Keys that skip this check do turn up in practice: Böck’s 2022 Fermat-attack survey found factorable keys generated by printers using a vulnerable crypto library.
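
To see why close primes are dangerous, here is a minimal sketch of Fermat's method. It searches for an \(a\) such that \(a^2 - n\) is a perfect square \(b^2\), which gives \(n = (a - b)(a + b)\). The function name and the returned step counter are our own additions for illustration.

```python
from math import isqrt

def fermat_factor(n):
    """Factor an odd composite n by searching for n = a^2 - b^2.

    Returns (smaller factor, larger factor, number of extra candidates tried).
    """
    a = isqrt(n)
    if a * a < n:
        a += 1                       # start at ceil(sqrt(n))
    steps = 0
    while True:
        b2 = a * a - n
        b = isqrt(b2)
        if b * b == b2:              # a^2 - n is a perfect square
            return a - b, a + b, steps
        a += 1
        steps += 1

print(fermat_factor(10007 * 10009))  # (10007, 10009, 0)
print(fermat_factor(61 * 53))        # (53, 61, 0)
print(fermat_factor(53 * 101))       # (53, 101, 3)
```

The first two moduli fall on the very first candidate because their primes are close together; widening the gap makes the search longer.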

What You Don’t Need: Strong Primes

- Older RSA literature demanded strong primes: specific structure on \(p \pm 1\) to block Pollard \(p-1\), Williams \(p+1\), and ECM

- This was a real constraint at 512- and 768-bit moduli (1990s deployments), where these special-purpose attacks were inside the threat model

- At 1024-bit primes and above (so 2048-bit moduli and up), the rule no longer applies

- The general number field sieve now dominates every special-purpose factoring attack the strong-prime conditions were designed to thwart

- Sample \(p\) and \(q\) uniformly at random; the historical structural requirements are obsolete

- This is the opposite of the DH safe-prime story

- DH safe primes remain mandatory at every size, because DH operates in a group whose order is \(p-1\) (composite, attackable via Pohlig-Hellman)

- RSA operates mod \(n\), where factoring is the only known attack and small-order subgroups don’t apply

- Different primitive, different parameter discipline. Don’t carry rules across.

RSA: Key Generation

- The canonical \(\text{RSAGen}(\ell, e)\) algorithm. Probabilistic, two system parameters

- \(\ell\) is half the target modulus bit length (e.g. \(\ell = 1024\) for a 2048-bit modulus)

- \(e \geq 3\) is a fixed odd public exponent (almost always 65,537)

- The algorithm itself

- Sample a random \(\ell\)-bit prime \(p\) with \(\gcd(e, p - 1) = 1\)

- Sample a random \(\ell\)-bit prime \(q \neq p\) with \(\gcd(e, q - 1) = 1\)

- Set \(n \leftarrow pq\)

- Compute \(d \leftarrow e^{-1} \bmod (p-1)(q-1)\)

- Return public key \((n, e)\) and secret key \((n, d)\)

- Building blocks: Miller-Rabin for prime sampling, extended Euclidean for inverting \(e\) mod \(\phi(n)\)
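
The steps of \(\text{RSAGen}(\ell, e)\) can be sketched as follows. This is an illustrative sketch, not production key generation: the 40 Miller-Rabin rounds, the small-prime pre-filter, and forcing the top two bits of each candidate (so that \(n\) has exactly \(2\ell\) bits) are our assumptions, and the function names are hypothetical.

```python
import secrets
from math import gcd

SMALL_PRIMES = (2, 3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37)

def is_probable_prime(n, rounds=40):
    """Miller-Rabin with `rounds` random bases (40 is an assumed default)."""
    if n < 2:
        return False
    for sp in SMALL_PRIMES:
        if n % sp == 0:
            return n == sp
    d, s = n - 1, 0                      # write n - 1 = 2^s * d with d odd
    while d % 2 == 0:
        d //= 2
        s += 1
    for _ in range(rounds):
        a = secrets.randbelow(n - 3) + 2  # random base in [2, n - 2]
        x = pow(a, d, n)
        if x in (1, n - 1):
            continue
        for _ in range(s - 1):
            x = pow(x, 2, n)
            if x == n - 1:
                break
        else:
            return False                  # this base proves n composite
    return True

def sample_prime(ell, e):
    """Random ell-bit probable prime p with gcd(e, p - 1) = 1 (assumes ell >= 8)."""
    while True:
        cand = secrets.randbits(ell) | (0b11 << (ell - 2)) | 1
        if is_probable_prime(cand) and gcd(e, cand - 1) == 1:
            return cand

def rsa_gen(ell, e=65537):
    p = sample_prime(ell, e)
    q = sample_prime(ell, e)
    while q == p:                         # enforce q != p
        q = sample_prime(ell, e)
    n = p * q
    d = pow(e, -1, (p - 1) * (q - 1))     # extended Euclid under the hood
    return (n, e), (n, d)

(pub_n, pub_e), (_, priv_d) = rsa_gen(256)
print(pub_n.bit_length())                               # 512
print(pow(pow(42, pub_e, pub_n), priv_d, pub_n) == 42)  # True
```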

RSA: Terminology

- \(n\) is the RSA modulus

- Everything happens in \(\mathbb{Z}_n\). At least 2048 bits (\(\ell \geq 1024\)); some orgs require 4096.

- \(e\) is the public exponent (a.k.a. encryption exponent)

- RSA encryption is \(x^e \bmod n\). Almost universally \(e = 2^{16} + 1 = 65{,}537\) (next slide).

- \(d\) is the private exponent (a.k.a. decryption exponent)

- RSA decryption is \(y^d \bmod n\)

- The public and secret keys aren’t independent

- \(d\) is constructed from \(e\), \(p\), \(q\) via \(d = e^{-1} \bmod \phi(n)\)

- RSA’s security: given just \((n, e)\), how hard is it to recover \(d\)? If easy, broken. If hard, RSA holds.

Why 65,537?

- Every real-world RSA public key you will ever see has \(e = 65{,}537\) [we devoutly hope]. This is not arbitrary.

- It is a Fermat prime: \(65{,}537 = 2^{2^4} + 1 = 2^{16} + 1\)

- In binary: `1 0000 0000 0000 0001`. Only two bits are set.

- Square-and-multiply computes \(x^e\) using 16 squarings plus 1 multiplication = 17 operations total

- Encryption is cheap; this matters when a TLS server encrypts thousands of handshakes per second

- It is large enough to avoid low-exponent attacks

- \(e = 3\) used to be common and enables Håstad’s broadcast attack: the same \(m\) encrypted to three recipients with \(e = 3\) lets an attacker recover \(m\) via CRT and a cube root (no factoring needed)

- \(e = 65{,}537\) is far above the threshold where these attacks apply to any plausible message count

- It is small enough that encryption is still cheap. Decryption (using \(d\)) costs \(O(\log d)\) multiplications, which is expensive no matter what. Keeping \(e\) small keeps the encryption side fast while the signer/decrypter eats the cost.

- This is the canonical interview question.
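
The operation count for \(e = 65{,}537\) can be checked with a small left-to-right square-and-multiply routine. This is a sketch for counting operations only (real implementations use constant-time big-number code); the function name and the returned counters are ours.

```python
def square_and_multiply(x, e, n):
    """Compute x^e mod n, returning (result, squarings, multiplications)."""
    bits = bin(e)[2:]                 # binary expansion, most significant bit first
    result = x % n                    # the leading 1 bit costs nothing
    squarings = multiplications = 0
    for bit in bits[1:]:
        result = (result * result) % n
        squarings += 1
        if bit == "1":                # only set bits cost an extra multiply
            result = (result * x) % n
            multiplications += 1
    return result, squarings, multiplications

value, sq, mul = square_and_multiply(65, 65537, 3233)
print(value == pow(65, 65537, 3233), sq, mul)   # True 16 1
```

Running the same routine with a full-size private exponent \(d\) would need roughly one squaring per bit of \(d\) plus a multiplication for each set bit, which is where the asymmetry between the two directions comes from.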

RSA as a Trapdoor Permutation

- Let’s plug RSA into the TDF scheme \(\mathcal{T}_\text{RSA} = (G, F, I)\) from earlier

- Key generation \(G\): run \(\text{RSAGen}\), set \(pk = (n, e)\) and \(sk = (n, d)\)

- Forward function \(F(pk, x) = x^e \bmod n\)

- Inverse function \(I(sk, y) = y^d \bmod n\)

- Domain \(=\) range \(=\mathbb{Z}_n\), so \(F(pk, \cdot)\) is genuinely a permutation, not just any TDF

- Factoring breaks RSA, but the converse is open

- If you can factor \(n\), you recover \(p\) and \(q\), compute \(\phi(n)\), and invert \(e\) mod \(\phi(n)\) to recover \(d\)

- Does breaking RSA require factoring? Unknown! The RSA problem is a hardness assumption, not a theorem

- At 2048+ bits the best classical factoring algorithm (the general number field sieve) is currently infeasible

RSA Keys in Practice

- Secret keys store more than \((n, d)\)

- Real RSA private keys hold \((p, q, d_p, d_q, q^{-1} \bmod p)\), where \(d_p = d \bmod (p-1)\) and \(d_q = d \bmod (q-1)\)

- Decryption uses the Chinese Remainder Theorem: compute \(y^{d_p} \bmod p\) and \(y^{d_q} \bmod q\), then combine

- About \(4\times\) faster than computing \(y^d \bmod n\) directly.

- This is what’s actually in a real PKCS#1 private key file. Open one sometime and have a look.

- Two more tweaks that real implementations bake in

- Use Carmichael’s \(\lambda(n) = \text{lcm}(p-1, q-1)\) instead of \(\phi(n)\) to get a smaller \(d\)

- Add constant-time blinding to defeat timing side-channel attacks on the exponent

- Both are in the speaker notes if you want the details. Neither changes the algebra we just walked through.
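
A sketch of CRT decryption on the toy key from the worked example. The recombination step is Garner's formula; the variable and function names are ours, and the last lines show the smaller exponent obtained from \(\lambda(n)\). No blinding or constant-time care is attempted here.

```python
from math import gcd

p, q, e = 61, 53, 17
n = p * q
d = pow(e, -1, (p - 1) * (q - 1))

# The extra values a real private key stores alongside p and q.
d_p = d % (p - 1)
d_q = d % (q - 1)
q_inv = pow(q, -1, p)                 # q^{-1} mod p

def crt_inverse(y):
    """Compute y^d mod n via two half-size exponentiations."""
    m_p = pow(y, d_p, p)
    m_q = pow(y, d_q, q)
    h = (q_inv * (m_p - m_q)) % p     # Garner's recombination
    return m_q + h * q

# Carmichael variant: invert e modulo lcm(p-1, q-1) instead of phi(n).
lam = (p - 1) * (q - 1) // gcd(p - 1, q - 1)
d_lambda = pow(e, -1, lam)

print(crt_inverse(2790), pow(2790, d, n))   # 65 65
print(d, d_lambda)                           # 2753 413
```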

RSA: Attack Game

- The one-way TDF attack game from earlier, specialised to RSA

- Both sides start with parameters \((\ell, e)\). The challenger computes \((n, d) \leftarrow \text{RSAGen}(\ell, e)\), samples \(x \xleftarrow{R} \mathbb{Z}_n\), and sets \(y = x^e \bmod n\)

- Challenger sends \((n, y)\) to the adversary. Adversary outputs \(\hat{x}\).

- \(\text{RSA}_\text{adv}[\mathcal{A}, \ell, e] = \Pr[\hat{x} = x]\)

- The RSA assumption: this advantage is negligible for every efficient adversary at appropriate \(\ell\)

- \((n, y)\) is an instance of the RSA problem; \(x\) is its solution

- Factoring \(n\) would let an adversary compute \(\phi(n)\), then \(d\), then \(x\). So factoring breaks RSA.

- Whether RSA can be broken without factoring is an open problem

Weak Keys in Practice: Heninger 2012

- The RSA assumption requires \(p\) and \(q\) drawn uniformly at random. Real deployments forget.

- Heninger et al. “Mining Your Ps and Qs” (2012) scanned the entire IPv4 space for TLS and SSH keys

- RSA private keys could be computed for 0.50% of TLS hosts and 0.03% of SSH hosts, because their moduli shared a prime factor with another key

- DSA private keys could be computed for 1.03% of SSH hosts, because of insufficient signature randomness

- The mechanism: embedded devices generated keys at first boot before the kernel had enough entropy

- Two devices from the same vendor often generated the same first prime but different second primes

- \(n_1 = p q_1\) and \(n_2 = p q_2\) both contain \(p\), so \(\gcd(n_1, n_2) = p\) in microseconds. Both keys factored.

- A batch-GCD computation across millions of collected moduli factored tens of thousands of keys in hours

- Lesson: the RSA assumption is only as strong as the randomness in keygen

- Same shape as Logjam for DH: primitive fine, deployment broke

- See also: Debian OpenSSL bug (2008, \(\approx\) 32k possible keys), ROCA (2017, Infineon smartcards)
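
The shared-prime failure is easy to reproduce at toy scale. In this sketch two hypothetical devices both drew \(p = 61\) but different second primes; a single `gcd` call exposes the shared factor, and both private exponents follow. The primes and the helper name are made up for illustration.

```python
from math import gcd

e = 17
p_shared, q1, q2 = 61, 53, 59         # hypothetical toy primes
n1, n2 = p_shared * q1, p_shared * q2

def factor_pair(n_a, n_b):
    """Return (p, q_a, q_b) if the two moduli share exactly one prime, else None."""
    g = gcd(n_a, n_b)
    if g in (1, n_a, n_b):
        return None                    # nothing shared, or identical moduli
    return g, n_a // g, n_b // g

p, q_a, q_b = factor_pair(n1, n2)
d1 = pow(e, -1, (p - 1) * (q_a - 1))   # private exponent of device 1
d2 = pow(e, -1, (p - 1) * (q_b - 1))   # private exponent of device 2

print(p, q_a, q_b)                     # 61 53 59
print(pow(pow(65, e, n1), d1, n1), pow(pow(65, e, n2), d2, n2))  # 65 65
```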

Public Key Encryption

Spoiler: textbook RSA isn’t it.

Defining Public-Key Encryption

- We have RSA as a trapdoor permutation. The TDF\(\to\)KE reduction gives us RSA-based key exchange.

- But can RSA encrypt a message directly? Before answering, let’s pin down what a public-key encryption scheme is, exactly.

- Bob runs a probabilistic key generation algorithm in advance, publishing \(pk\) and keeping \(sk\)

- Alice encrypts a message \(m\) using Bob’s \(pk\) to get a ciphertext \(c\)

- Bob decrypts \(c\) using his \(sk\) to recover \(m\)

- Different keys for encryption and decryption: this is also called asymmetric encryption

- Now let’s nail down the algorithms and the security definition before we ask whether RSA fits the bill.

Public Key Encryption: Definition

- A public-key encryption scheme \(\mathcal{E} = (G, E, D)\) is a triple of efficient algorithms

- Key generation \(G\): probabilistic, outputs \((pk, sk) \leftarrow G()\)

- Public key \(pk\), secret key \(sk\)

- Encryption \(E\): probabilistic, invoked as \(c \leftarrow E(pk, m)\)

- Input: public key \(pk\), message \(m\). Output: ciphertext \(c\)

- Decryption \(D\): deterministic, invoked as \(m \leftarrow D(sk, c)\)

- Input: secret key \(sk\), ciphertext \(c\). Output: message \(m\) or a special reject symbol

- Correctness requirement: decryption must undo encryption

- For all \((pk, sk)\) output by \(G\) and all messages \(m\)

- \(\Pr[D(sk, E(pk, m)) = m] = 1\)

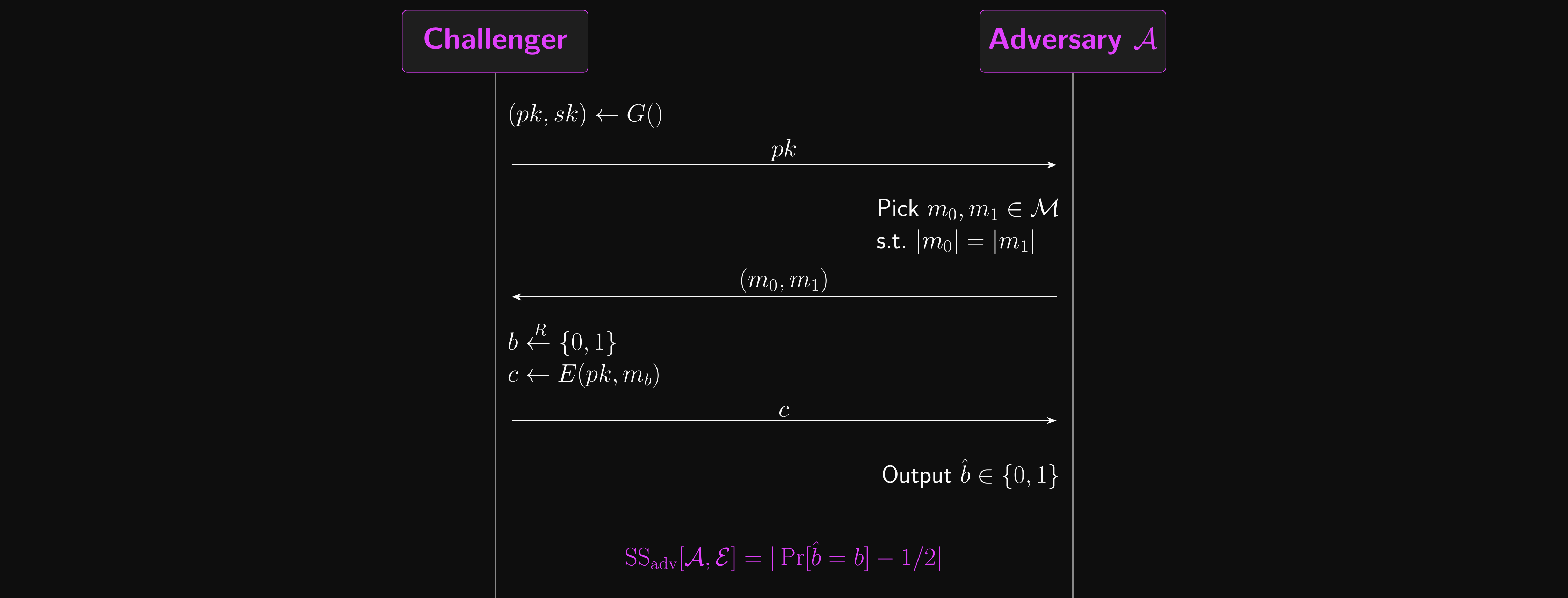

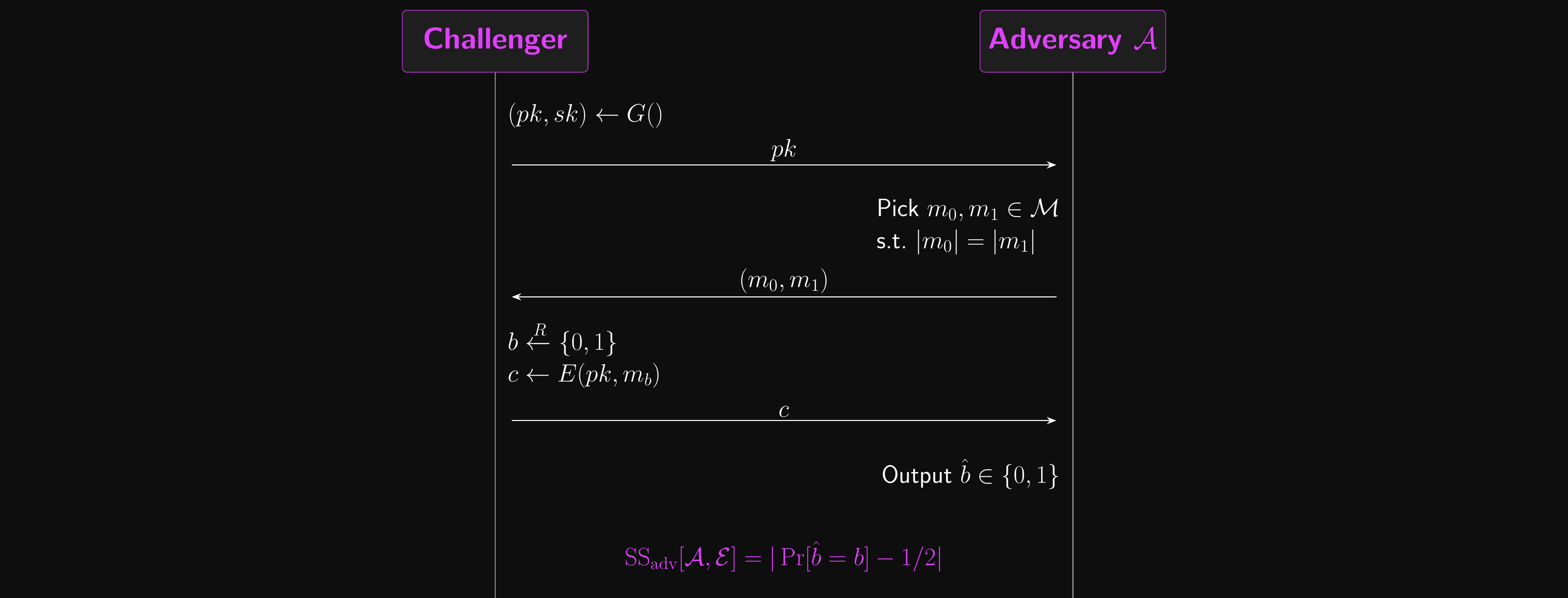

Public Key Encryption: Attack Game

- \(\mathcal{E}\) is semantically secure if no efficient adversary can distinguish encryptions of any two equal-length messages

- Two experiments, \(b \in \{0, 1\}\), mirroring the symmetric SS game from L01

- The challenger generates \((pk, sk) \leftarrow G()\) and sends \(pk\) to the adversary \(\mathcal{A}\)

- \(\mathcal{A}\) selects two messages \(m_0, m_1\) of equal length, sends both to the challenger

- In experiment \(b\), the challenger sends \(c \leftarrow E(pk, m_b)\)

- \(\mathcal{A}\) outputs a guess \(\hat{b}\)

- \(\mathcal{A}\)’s advantage: \(\text{SS}_\text{adv}[\mathcal{A}, \mathcal{E}] = |\Pr[\hat{b} = b] - 1/2|\)

- \(\mathcal{E}\) is secure if this is negligible for every efficient \(\mathcal{A}\)

PKE Must Be Randomised

- Here’s the key observation: the adversary holds \(pk\), so they can encrypt anything they like

- Given the challenge \(c\), they compute \(E(pk, m_0)\) and \(E(pk, m_1)\) themselves and compare

- If \(E\) is deterministic, exactly one matches \(c\) and the adversary guesses \(b\) correctly every time: advantage \(1/2\), the maximum possible under our definition

- So any semantically secure PKE scheme must be randomised

- Every call to \(E(pk, m)\) must draw fresh internal randomness

- Encrypting the same \(m\) twice should yield different ciphertexts

- This is not a design choice. It is forced by the game structure.

- Bonus: semantic security here automatically implies CPA security

- The adversary can already encrypt anything they want, so an encryption oracle adds nothing

- Unlike symmetric encryption, where CPA was a separate property we had to prove

Attempt 1: Raw RSA Fails

- The 1977 paper proposed textbook RSA: encrypt as \(c = m^e \bmod n\), decrypt as \(m = c^d \bmod n\)

- It is deterministic. We just proved no deterministic PKE can be IND-CPA-secure.

- Adversary submits \(m_0, m_1\), receives \(c = m_b^e \bmod n\)

- Adversary computes \(m_0^e \bmod n\) themselves (they have \(pk\)) and compares. They win with probability 1, i.e. the maximum advantage of \(1/2\).

- This is structural, not a usage bug. The trapdoor permutation has nowhere to hide which message was encrypted.
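
The distinguisher is short enough to run. The sketch below plays the semantic-security game against textbook RSA on the toy public key from the worked example; the function names and the two challenge messages are our own choices.

```python
import secrets

n, e = 3233, 17                        # toy public key from the worked example

def textbook_encrypt(m):
    return pow(m, e, n)                # deterministic: no randomness anywhere

def challenger(m0, m1):
    """Pick a secret bit b and return it together with the challenge ciphertext."""
    b = secrets.randbelow(2)
    return b, textbook_encrypt((m0, m1)[b])

def adversary(m0, m1, c):
    """Re-encrypt m0 under the public key and compare with the challenge."""
    return 0 if textbook_encrypt(m0) == c else 1

def play(trials=1000):
    wins = 0
    for _ in range(trials):
        m0, m1 = 65, 66
        b, c = challenger(m0, m1)
        wins += adversary(m0, m1, c) == b
    return wins / trials

print(play())                          # 1.0
```

Because \(x \mapsto x^e \bmod n\) is a permutation, distinct messages always give distinct ciphertexts, so the comparison never misfires.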

Attempt 2: Just Add Random Padding?

- The obvious fix: pad the message with random bytes before RSA-encrypting

- Encrypt \(m' = \text{pad}(m, r)\) where \(r\) is fresh random per encryption

- Different \(r\) each time means different ciphertext, so the Attempt 1 distinguisher fails

- PKCS#1 v1.5 (1993) did exactly this and shipped everywhere

- SSL 2.0/3.0, early TLS, S/MIME, X.509 certificates

- In 1998, Bleichenbacher broke it

- The padding format had a specific structure (leading bytes `0x00 0x02`)

- A server that reveals anything about whether decryption returned valid padding leaks one bit per query

- Around \(10^6\) carefully crafted queries decrypt a single captured ciphertext

- No key recovery needed. No factoring. Just exploit the padding oracle.

- The lesson: ad-hoc randomness is not enough. You need the randomisation to be bound into the security proof.
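
For reference, here is a sketch of the PKCS#1 v1.5 encryption encoding itself, following the RSAES-PKCS1-v1_5 layout in RFC 8017: `0x00 0x02`, at least eight nonzero random bytes, a `0x00` separator, then the message. Here `k` is the modulus length in bytes; the function names are ours, and the RSA exponentiation step is omitted.

```python
import secrets

def pkcs1_v15_pad(message: bytes, k: int) -> bytes:
    """Build EM = 0x00 || 0x02 || PS || 0x00 || M for a k-byte modulus."""
    ps_len = k - len(message) - 3
    if ps_len < 8:
        raise ValueError("message too long")
    # PS must contain no zero byte, otherwise the separator is ambiguous.
    ps = bytes(secrets.randbelow(255) + 1 for _ in range(ps_len))
    return b"\x00\x02" + ps + b"\x00" + message

def pkcs1_v15_unpad(em: bytes) -> bytes:
    """Strip the padding, raising ValueError if the structure is wrong."""
    if len(em) < 11 or em[:2] != b"\x00\x02":
        raise ValueError("bad padding")
    sep = em.find(b"\x00", 2)
    if sep < 10:                       # separator missing, or PS shorter than 8
        raise ValueError("bad padding")
    return em[sep + 1:]

em = pkcs1_v15_pad(b"session key", 32)
print(len(em), em[:2].hex(), pkcs1_v15_unpad(em))  # 32 0002 b'session key'
```

Any caller who can tell the `ValueError` path apart from a successful unpad (by error message, status code, or timing) is looking at exactly the oracle Bleichenbacher exploited.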

Attempt 3: OAEP

- RSA-OAEP (Optimal Asymmetric Encryption Padding) is the real fix

- Randomises the encoding using a hash function, with a specific structure that’s provably CCA-secure in the ROM

- Standardised in PKCS#1 v2 / RFC 8017, used by S/MIME, JOSE/JWE, and modern IoT and smartcard stacks

- Note: TLS RSA key exchange (1.2 and earlier) used PKCS#1 v1.5, not OAEP; TLS 1.3 removed RSA key exchange entirely

- We’ll define OAEP properly in Lecture 06 once CCA security and the ROM are on the table

- RSA encryption padding and RSA signature padding are not the same thing

- RSA-OAEP is for encryption (what we care about here)

- RSA-PSS is for signatures (Probabilistic Signature Scheme; also L06)

- Don’t mix them up. Libraries that call both “RSA padding” have caused production incidents.

- The takeaway: textbook RSA is a trapdoor permutation, not an encryption scheme

- To turn it into an encryption scheme we need careful randomisation AND a CCA-style security argument

- Same pattern as MAC composition in L04: the primitive is fine, the construction around it does the work

RSA in 2026: Where It Still Lives

- RSA is not dead. It is also not the right tool for new code.

- Still RSA today

- TLS certificate roots (slowly migrating; CA/B Forum still permits RSA-2048)

- JWT signing (`RS256` is the default in most JWT libraries and most OIDC providers)

- Code signing: Windows Authenticode, macOS codesigning, sigstore/cosign fallback

- Legacy HSMs, PKCS#11 smartcards, YubiKey PIV slot, Estonian/Latvian national ID cards

- The common thread: long-lived deployments where rotation is expensive and the install base predates ECC defaults

- If you are reading old code and it uses RSA, that’s fine. Migrate when you have the budget.

RSA in 2026: Where to Use ECC Instead

- Switch to ECC for new work

- TLS leaves: ECDSA (P-256) or Ed25519 beat RSA on performance and bandwidth

- SSH host/user keys: Ed25519 has been supported since OpenSSH 6.5 (2014) and is the default `ssh-keygen` key type since 9.5 (2023)

- Messaging and protocol design: X25519 + Ed25519 (Signal, WireGuard, age, Noise)

- Constrained devices: 256-bit ECC keys vs 3072-bit RSA keys is a factor of 12 on the wire

- Never reach for RSA first in 2026

- Greenfield key exchange: X25519, not RSA-KEM

- Greenfield signatures: Ed25519, not RSA-PSS

- Post-quantum: neither RSA nor ECC; ML-KEM + ML-DSA (see Quantum Threat slide)

- Rule of thumb for your career: if you are writing new code and reaching for RSA, stop and ask why. There is almost always a better option.

PKE from Trapdoor Functions

- We can build a public-key encryption scheme \(\mathcal{E}_\text{TDF}\) from any TDF \(\mathcal{T} = (G, F, I)\) and a hash function \(H\)

- Encrypt \((pk, m)\):

- \(x \xleftarrow{R} \mathcal{X}\)

- \(y \leftarrow F(pk, x)\)

- \(k \leftarrow H(x)\)

- \(c \leftarrow E_s(k, m)\) [symmetric encryption]

- output \((y, c)\)

- Decrypt \((sk, (y, c))\):

- \(x \leftarrow I(sk, y)\)

- \(k \leftarrow H(x)\)

- \(m \leftarrow D_s(k, c)\) [symmetric decryption]

- output \(m\)
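
The construction above can be sketched end to end with toy components. Everything concrete here is an assumption for illustration: the trapdoor function is RSA over two small Mersenne primes (a poor choice for real keys, used only so the example is reproducible), \(H\) is SHA-256, and the symmetric cipher \(E_s\) is a hand-rolled XOR with a SHA-256 counter keystream standing in for a real cipher.

```python
import hashlib
import secrets

# Toy RSA trapdoor permutation (hypothetical parameters, NOT secure).
P, Q, E = 2**127 - 1, 2**89 - 1, 65537
N = P * Q
D = pow(E, -1, (P - 1) * (Q - 1))
N_BYTES = (N.bit_length() + 7) // 8

def H(x: int) -> bytes:
    """Hash the TDF input x down to a 32-byte symmetric key."""
    return hashlib.sha256(x.to_bytes(N_BYTES, "big")).digest()

def stream_xor(key: bytes, data: bytes) -> bytes:
    """Stand-in for E_s and D_s: XOR with a SHA-256 counter keystream."""
    out = bytearray()
    for i in range(0, len(data), 32):
        block = hashlib.sha256(key + (i // 32).to_bytes(8, "big")).digest()
        out.extend(a ^ b for a, b in zip(data[i:i + 32], block))
    return bytes(out)

def encrypt(pk, m: bytes):
    n, e = pk
    x = secrets.randbelow(n)          # x <-R- X
    y = pow(x, e, n)                  # y <- F(pk, x)
    k = H(x)                          # k <- H(x)
    return y, stream_xor(k, m)        # output (y, c)

def decrypt(sk, ciphertext):
    n, d = sk
    y, c = ciphertext
    x = pow(y, d, n)                  # x <- I(sk, y)
    return stream_xor(H(x), c)        # m <- D_s(H(x), c)

ct = encrypt((N, E), b"attack at dawn")
print(decrypt((N, D), ct))            # b'attack at dawn'
```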

PKE from TDF: Why It’s Secure

- This construction is IND-CPA-secure if \(\mathcal{T}\) is one-way, \(H\) is modelled as a random oracle, and the symmetric cipher \((E_s, D_s)\) is semantically secure

- First proven-secure PKE scheme we’ve seen in the course

- Unlike textbook RSA (broken), \(\mathcal{E}_\text{TDF}\) has a real security theorem

- Security reduces to TDF one-wayness: same reduction template as TDF\(\to\)KE from earlier, reused with a symmetric-encryption wrapper

- The random \(x\) provides the randomisation that textbook RSA lacks

- The TDF hides \(x\); the hash \(H\) extracts a symmetric key from \(x\); then symmetric encryption handles the message

- In L06 we’ll see RSA-OAEP: a more careful version of this idea that also achieves CCA security

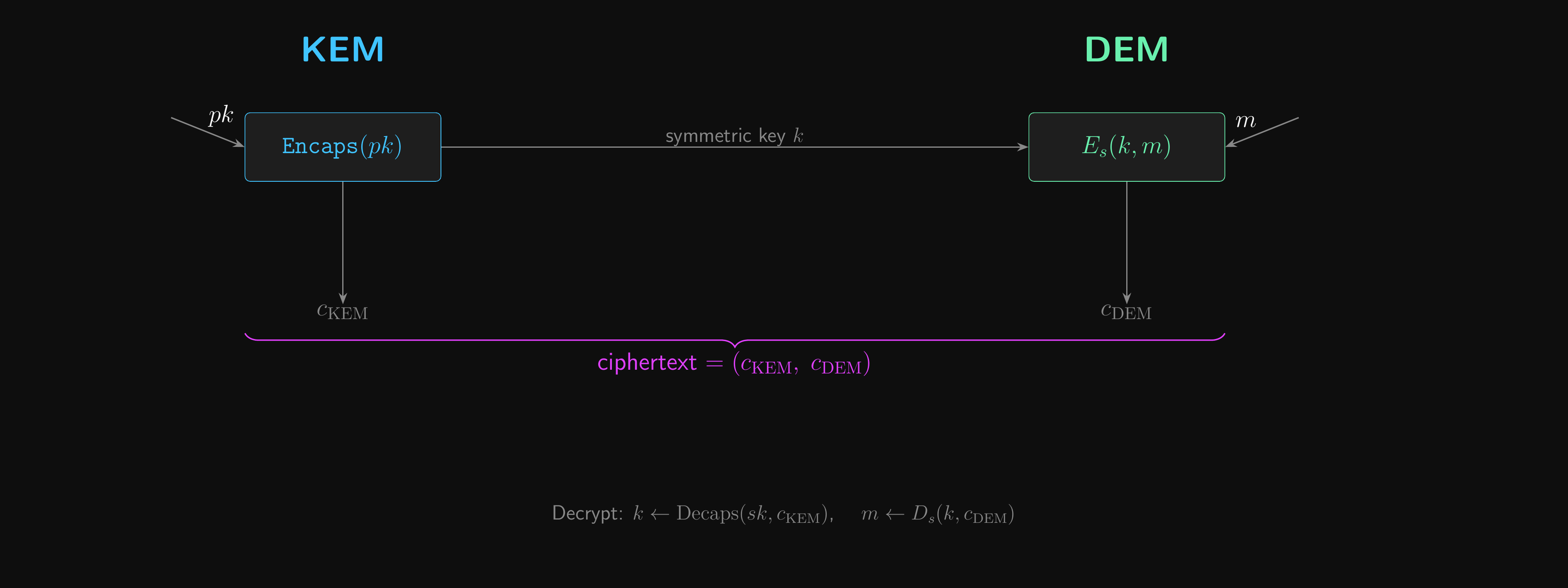

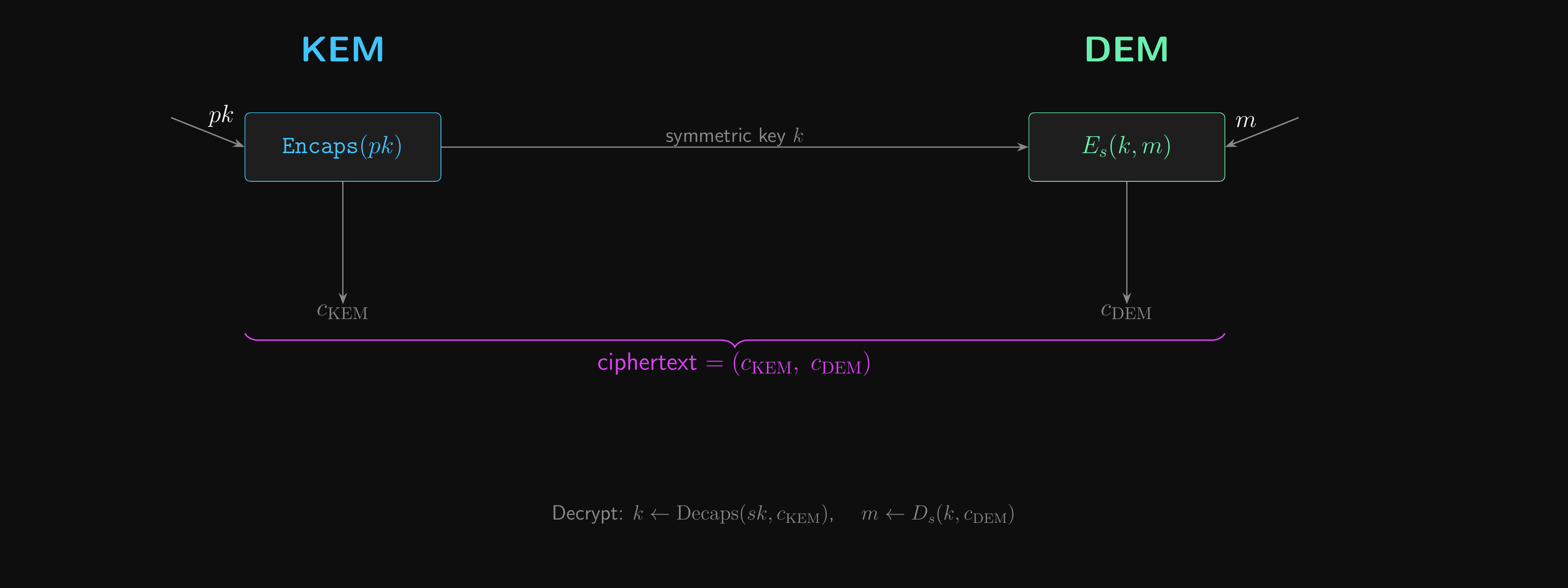

Hybrid Cryptosystems

- \(\mathcal{E}_\text{TDF}\) already reveals the pattern: public-key crypto handles a short secret; symmetric crypto handles the data

- After that, symmetric encryption takes over for the bulk of the work

- This concept is formalised by the idea of a key encapsulation mechanism (KEM)

- The KEM generates and encrypts a random symmetric key in one step

- In \(\mathcal{E}_\text{TDF}\), the \((x, y)\) pair is exactly a KEM!

- Instead of encrypting a message directly, we encrypt a random key (used for symmetric encryption) to send to the other party

- This gives a clean separation of concerns in a hybrid cryptosystem

- KEM handles key distribution

- Data encapsulation scheme (symmetric cipher) handles data encryption

- Pretty much every popular use of public key encryption follows this pattern.

- TLS, SSH, OpenPGP and HPKE (RFC 9180)

KEM/DEM Hybrid Encryption

From Secret Material to a Session Key

- Both DH and RSA-KEM hand us a secret blob, not a usable symmetric key

- DH: a group element \(w = g^{\alpha\beta}\), distribution biased by \(\mathbb{G}\)

- RSA-KEM (and \(\mathcal{E}_\text{TDF}\)): a random \(x \in \mathbb{Z}_n\), also not uniform bits

- Either way, you cannot just pass it to AES

- A key derivation function (KDF) turns secret material into uniform keying material

- The standard choice is HKDF (RFC 5869): extract-then-expand, built from HMAC

- Used in TLS 1.3, HPKE, Signal, WireGuard. We’ll build it properly in L07.

- Treat \(K \leftarrow \text{KDF}(w)\) or \(K \leftarrow \text{KDF}(x)\) as a black box for now

- Every time we use a KEM or DH output as a key in this lecture, this step is implicit

- Password-based KDFs (PBKDF2, Argon2id) are a different tool for low-entropy inputs; also L07
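
Until L07, the black box can be pictured as the following sketch of HKDF with SHA-256, written from the extract-then-expand description in RFC 5869 using only the standard `hmac` and `hashlib` modules. The function names, the example input, and the `info` labels are ours; prefer a vetted library implementation in real code.

```python
import hashlib
import hmac

HASH_LEN = 32                          # SHA-256 output size in bytes

def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """Concentrate the input keying material into a pseudorandom key."""
    if not salt:
        salt = bytes(HASH_LEN)         # an empty salt defaults to HASH_LEN zero bytes
    return hmac.new(salt, ikm, hashlib.sha256).digest()

def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """Stretch the pseudorandom key into `length` bytes of output."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested output too long")
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        # T(i) = HMAC(PRK, T(i-1) || info || i)
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]

def hkdf(ikm: bytes, salt: bytes = b"", info: bytes = b"", length: int = 32) -> bytes:
    return hkdf_expand(hkdf_extract(salt, ikm), info, length)

# Example: derive two independent keys from one (made-up) shared secret.
secret = (123456789).to_bytes(16, "big")
enc_key = hkdf(secret, info=b"demo encryption key")
mac_key = hkdf(secret, info=b"demo mac key")
print(len(enc_key), len(mac_key), enc_key != mac_key)   # 32 32 True
```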

Hardening the Key Exchange

What if your key leaks five years from now?

Three Attempts at a Real Protocol

- Plain DH assumes a passive eavesdropper. Real adversaries are active and keys eventually leak.

- We’ll iterate toward a protocol that survives both

- Same cascade structure we used for HMAC in Lecture 04: each naive attempt fails in a specific way, and the failure tells us which property we needed

- Attempt 1: Static keys. Alice and Bob reuse long-term DH public keys

- Gets us authentication (if the public keys are distributed via PKI)

- Breaks forward secrecy: one key leak exposes every past session

- Attempt 2: Anonymous ephemeral DH. Fresh key pair per session

- Gets us forward secrecy: nothing left to subpoena

- Breaks authentication: man-in-the-middle walks right in

- Attempt 3: Authenticated ephemeral DH. Long-term keys for identity, ephemeral keys for secrecy

- Both properties at once. The construction is 3DH.

- Let’s walk each failure, then land on the construction

Attempt 1: Static Keys

- Alice and Bob publish long-term (static) DH public keys \(A = g^a\) and \(B = g^b\) in a PKI

- Naming aside: Boneh & Shoup and most RFCs say long-term, Signal says identity (\(IK\)). Same thing.

- Every session between them derives \(K = g^{ab}\)

- Authentication comes for free from the PKI: \(A\) is provably Alice’s

- What breaks? Forward secrecy.

- If \(a\) or \(b\) ever leaks (backup stolen, server breached, subpoena served), every past session is exposed

- An adversary who recorded ciphertexts for the past year decrypts all of it

- This is the classical harvest now, decrypt later threat model; not hypothetical

- Forward secrecy is the property we want: compromise of long-term keys does not compromise past session keys

- This requires ephemeral keys: fresh key pairs generated per session

- After the session ends, the ephemeral secret is destroyed. There is nothing left to leak.

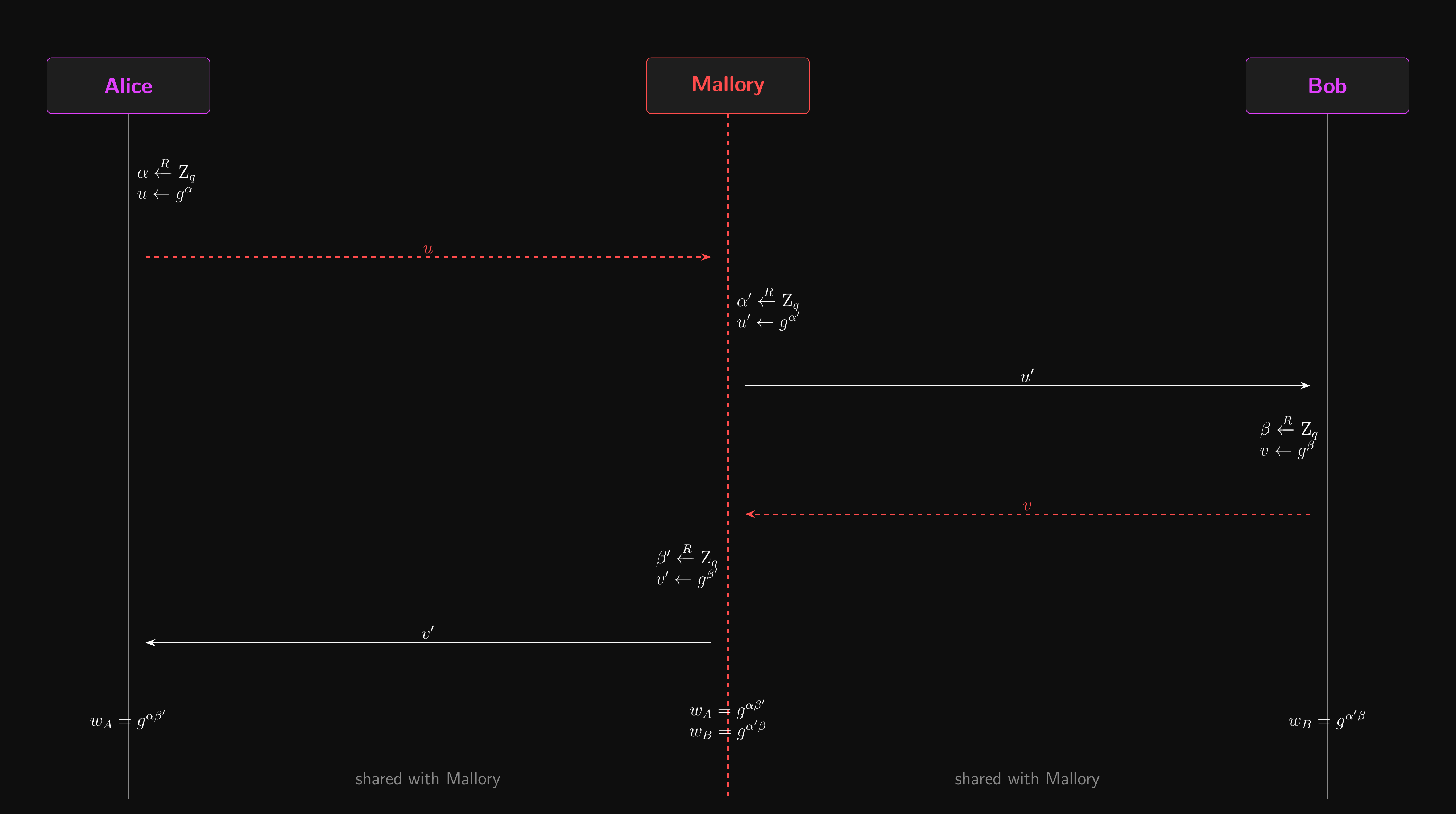

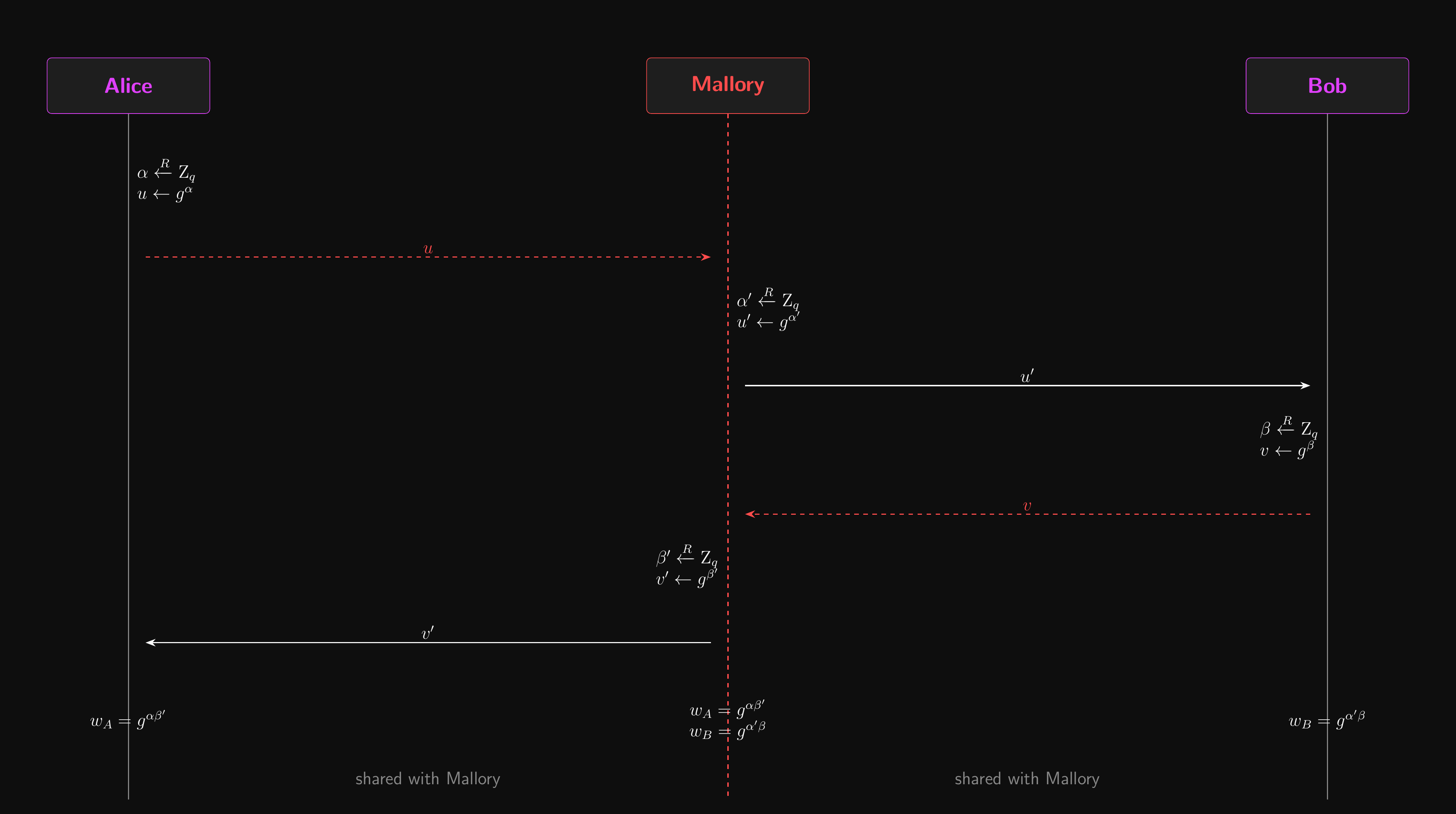

Attempt 2: Anonymous Ephemeral DH

- Fix Attempt 1 by generating fresh key pairs per session

- Alice: new \(\alpha\), send \(g^\alpha\). Bob: new \(\beta\), send \(g^\beta\). Derive \(K\) as usual.

- Destroy \(\alpha\) and \(\beta\) after the session. No long-term key to leak, forward secrecy achieved.

- What breaks? Authentication. The protocol is anonymous by construction.

- Mallory sits between Alice and Bob and runs the protocol twice

- With Alice, Mallory impersonates Bob and they agree on a key \(K_\text{AM}\)

- With Bob, Mallory impersonates Alice and they agree on a different key \(K_\text{MB}\)

- Mallory relays messages, re-encrypting with the appropriate key. Alice and Bob never notice.

- This fails instantly against an active attacker because there is nothing to tie \(g^\alpha\) to Alice

- Forward secrecy is worthless if the adversary reads everything in real time
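
Mallory's two parallel runs can be simulated directly. This sketch uses a toy group (the prime \(2^{127} - 1\) and generator 3 are assumptions chosen for readability, not security) and our own variable names; it shows that each honest party ends up sharing a key with Mallory rather than with each other.

```python
import secrets

# Toy group parameters (assumption: for illustration only, not secure).
P = 2**127 - 1
G = 3

def keypair():
    """Fresh ephemeral secret and the matching public value g^secret mod P."""
    secret = secrets.randbelow(P - 2) + 1
    return secret, pow(G, secret, P)

alpha, g_alpha = keypair()             # Alice's ephemeral pair
beta, g_beta = keypair()               # Bob's ephemeral pair
mu_a, to_alice = keypair()             # Mallory's pair for the run with Alice
mu_b, to_bob = keypair()               # Mallory's pair for the run with Bob

# Mallory intercepts g_alpha and g_beta and substitutes her own values.
k_alice = pow(to_alice, alpha, P)      # what Alice thinks she shares with Bob
k_bob = pow(to_bob, beta, P)           # what Bob thinks he shares with Alice

k_am = pow(g_alpha, mu_a, P)           # Mallory's key with Alice
k_mb = pow(g_beta, mu_b, P)            # Mallory's key with Bob

print(k_alice == k_am, k_bob == k_mb)  # True True
```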

Man-in-the-Middle Attack

Why Authentication Needs a Trust Anchor

- Attempt 2 failed because nothing tied \(g^\alpha\) to Alice specifically

- We need to bind a key to an identity, not just exchange a key

- Public keys on real websites come signed by a certificate authority (CA)

- The CA’s signature binds “this public key” to “this domain name”

- Your browser verifies that signature using the CA’s own public key, which your OS ships pre-installed

- Note that we’re verifying who they are, not whether they’re trustworthy!

- downloadmoreram.com is unlikely to give you more RAM, legit signature or not

- We’ll build signatures and PKI properly in L06

- For this section, we’ll assume authenticated identity keys exist and carry on

Attempt 3: Authenticated Ephemeral DH

- Attempt 1 gave us authentication but no forward secrecy. Attempt 2 gave us forward secrecy but no authentication. We need both.

- The fix: two key pairs per party

- A long-term (static) identity key pair, published in a PKI, provides authentication

- A fresh ephemeral key pair per session, destroyed after use, provides forward secrecy

- Mix them with multiple DH operations so that the session key depends on both

- If an adversary wants the session key, they need access to the ephemeral secrets (which no longer exist) AND the long-term secrets

- Even a future compromise of the long-term key cannot reconstruct past session keys

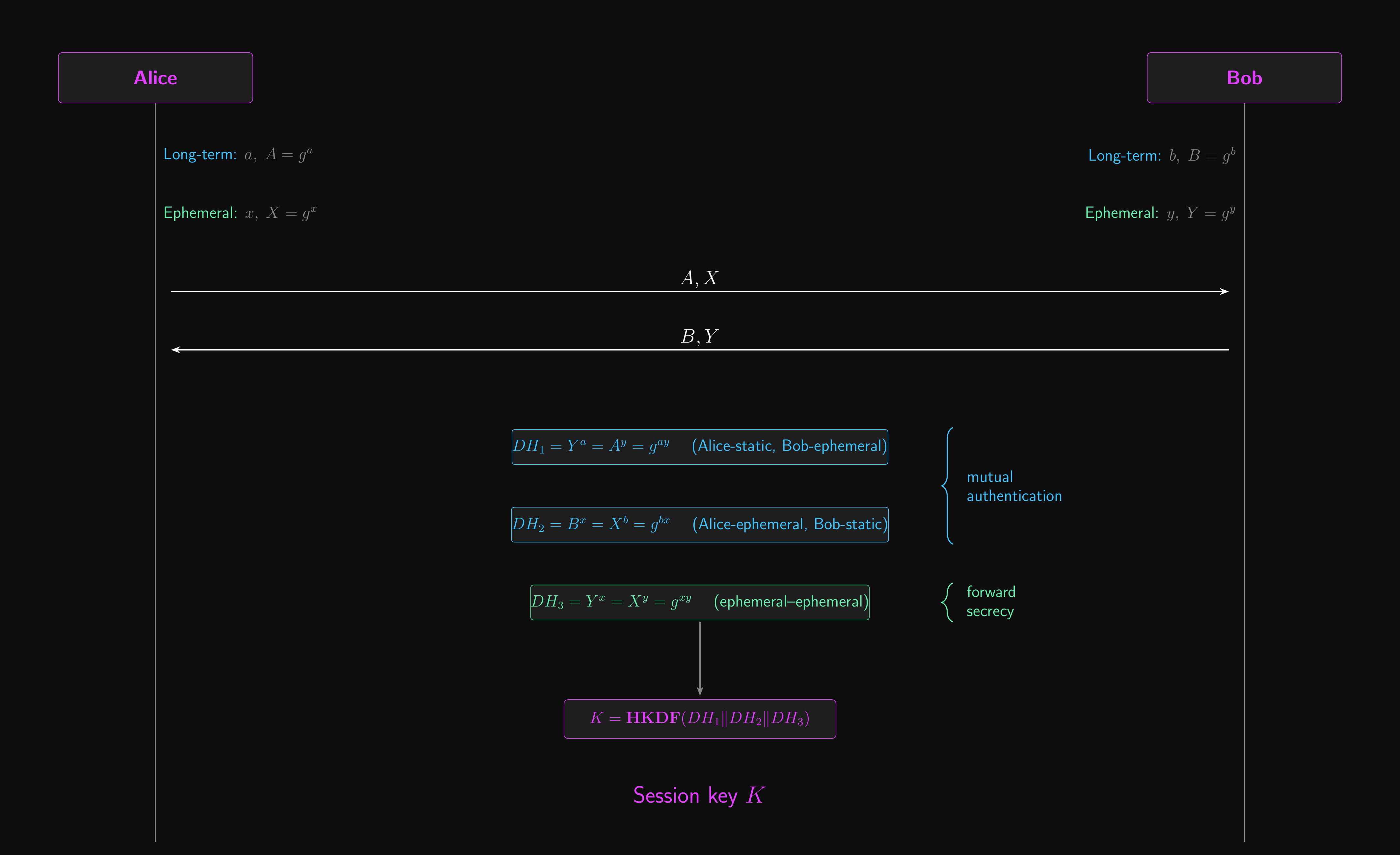

- The standard construction is Triple Diffie-Hellman (3DH): three DH operations combined through a KDF

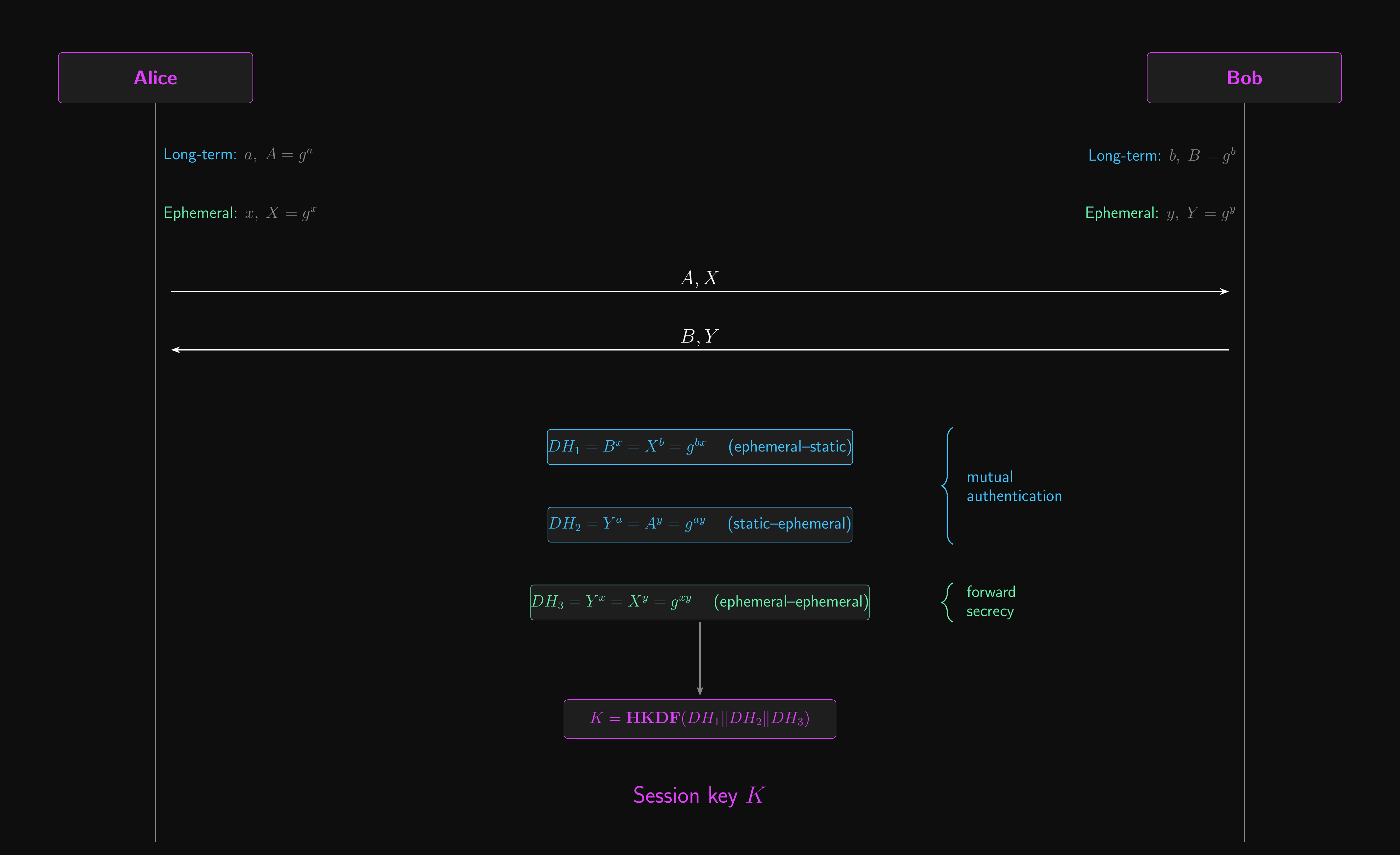

Triple Diffie-Hellman (3DH)

- Let’s assume that we’ve already agreed system parameters \(g\) and \(p\)

- Let’s use conventional notation this time, \(PUBLIC\) and \(private\)

- Long before we start, Alice and Bob generate long-term identity key pairs

- Alice generates \(A = g^a \bmod p\) and publishes her public key \(A\)

- Bob generates \(B = g^b \bmod p\) and publishes his public key \(B\)

- The public keys may be made available through some kind of PKI

- Their purpose is to prove that Alice and Bob are who they say they are.

- Alice and Bob generate fresh ephemeral key pairs for each session

- Alice generates \(X = g^x \bmod p\) and publishes her public key \(X\)

- Bob generates \(Y = g^y \bmod p\) and publishes his public key \(Y\)

- Their purpose is to provide forward secrecy

- Past sessions aren’t at risk if this session’s key is compromised.

Triple Diffie-Hellman: Session Key

- Three DH operations, each one combining a different mix of static and ephemeral keys

- \(DH_1 = g^{ay}\): Alice’s static \(a\) meets Bob’s ephemeral \(y\)

- \(DH_2 = g^{bx}\): Bob’s static \(b\) meets Alice’s ephemeral \(x\)

- \(DH_3 = g^{xy}\): pure ephemeral, both sides

- Each side uses only what it has

- Alice (knows \(a\), \(x\); received \(B\), \(Y\)): \(DH_1 = Y^a\), \(\;DH_2 = B^x\), \(\;DH_3 = Y^x\)

- Bob (knows \(b\), \(y\); received \(A\), \(X\)): \(DH_1 = A^y\), \(\;DH_2 = X^b\), \(\;DH_3 = X^y\)

- Final session key: \(K = \text{HKDF}(DH_1 \,\|\, DH_2 \,\|\, DH_3)\)
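
Both sides of the computation can be sketched as follows. The group is a toy (prime \(2^{127} - 1\), generator 3), and the `kdf` helper is a simplified stand-in for HKDF: a zero-salt extract followed by the first expand block, with a made-up `info` label. All names are ours.

```python
import hashlib
import hmac
import secrets

# Toy group parameters (assumption: for illustration only, not secure).
P = 2**127 - 1
G = 3
ELEM_BYTES = (P.bit_length() + 7) // 8

def keypair():
    secret = secrets.randbelow(P - 2) + 1
    return secret, pow(G, secret, P)

def kdf(dh1, dh2, dh3):
    """Stand-in for HKDF(DH1 || DH2 || DH3) producing a 32-byte key."""
    ikm = b"".join(v.to_bytes(ELEM_BYTES, "big") for v in (dh1, dh2, dh3))
    prk = hmac.new(bytes(32), ikm, hashlib.sha256).digest()
    return hmac.new(prk, b"3dh-demo" + b"\x01", hashlib.sha256).digest()

a, A = keypair()                       # Alice's long-term identity pair
b, B = keypair()                       # Bob's long-term identity pair
x, X = keypair()                       # Alice's ephemeral pair
y, Y = keypair()                       # Bob's ephemeral pair

# Alice knows a, x and has received B, Y.
alice_key = kdf(pow(Y, a, P), pow(B, x, P), pow(Y, x, P))
# Bob knows b, y and has received A, X.
bob_key = kdf(pow(A, y, P), pow(X, b, P), pow(X, y, P))

print(alice_key == bob_key)            # True
```

Feeding the three values to the KDF in the same fixed order on both sides matters: swapping \(DH_1\) and \(DH_2\) on one side would yield a different key.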

Triple Diffie-Hellman: Why It Works

- Where forward secrecy comes from

- \(DH_3\) depends only on \(x\) and \(y\), which get destroyed after the session

- Even if \(a\) and \(b\) leak later, \(DH_3\) can’t be reconstructed, so neither can past session keys

- Where authentication comes from

- \(DH_1\) and \(DH_2\) each bind a static identity key to the session

- Mallory can’t produce either without a stolen static key

- A long-term key compromise loses you future sessions, but not past ones. That’s the whole point.

- Signal’s X3DH extends this idea so the protocol works even when one party is offline. Coming up next.

X3DH: Extending 3DH to Asynchronous Messaging

- 3DH needs both parties online. Real messaging doesn’t.

- Bob is asleep, on a plane, or out of battery; Alice still wants to send a message now

- Signal’s X3DH (“Extended”) solves this by having Bob prepublish key material to a server

- A semi-static signed prekey (rotated weekly) plus a stockpile of one-time prekeys

- Alice fetches a bundle, runs four DH operations against Bob’s published keys plus her own ephemeral, and derives the session key with HKDF

- First encrypted message ships with Alice’s ephemeral and identity public keys in the header so Bob can replay the same DHs when he comes online

- Used by Signal, WhatsApp, Wire and most modern end-to-end-encrypted messengers

- After X3DH establishes the session key, Signal’s Double Ratchet takes over for per-message forward secrecy. We build X3DH and the Double Ratchet fully in Lecture 08.

Why TLS 1.3 Mandates Ephemeral Key Exchange

- TLS 1.3 removed support for static RSA and static DH key exchange

- Only ephemeral (EC)DH is allowed

- The reason: forward secrecy is not optional for modern protocols

- A compromised server key should not retroactively expose years of recorded traffic

- With static RSA, anyone who obtains the server’s private key can decrypt all past sessions

- With ephemeral DH, each session uses a fresh key pair that is destroyed after use

The Quantum Threat

Memento mori.

The Quantum Threat

- Forward secrecy defeats the classical harvest-now-decrypt-later threat

- Record today’s ciphertext, obtain the server’s key later, decrypt retroactively

- Ephemeral DH defeats this: there is no long-term key left to expose

- Shor’s algorithm reintroduces the threat through a different mechanism

- It solves both integer factorisation and discrete log in polynomial time

- RSA, finite-field DH, and elliptic curve DH all fall to a sufficiently large quantum computer

- Current quantum hardware is too small and noisy to reach real key sizes; engineering progress is continuous

- Recorded traffic today can be decrypted once quantum hardware matures

- Forward secrecy does not help: \(g^{\alpha\beta}\) can be recovered from \(g^\alpha\) and \(g^\beta\) via Shor’s algorithm

- NIST has standardised post-quantum alternatives

- ML-KEM (FIPS 203) for key encapsulation, ML-DSA (FIPS 204) for signatures

- We’ll return to post-quantum cryptography later in the module

Digital Signatures

MACs you can’t take back.

The Authentication Gap

- We’ve solved key exchange (DH, ECDH) and public-key encryption (PKE from TDF, KEM/DEM)

- But everything is anonymous: no authentication

- MACs provide message authentication, but require a shared secret key

- Any keyholder can produce a tag

- Bob can verify Alice’s tag, but he could have produced it himself

- Can we do message authentication using only public keys?

- Yes: digital signatures

Non-Repudiation

- Digital signatures provide a property MACs cannot: non-repudiation

- Only the signer (secret key holder) can produce a valid signature

- Anyone with the public key can verify it

- Once Alice signs, she cannot credibly deny it to a third party

- This is strictly stronger than MAC authentication

- Not always desirable: sometimes deniability is a feature, not a bug

- In law, electronic signature \(\neq\) cryptographic digital signature

Signatures in Practice

- Software distribution: Microsoft signs updates with its secret key

- Customers verify with the public key before installing

- No shared keys, no trusted third party for end users

- Authenticated email (DKIM): domains sign outgoing emails

- Public key published in a DNS record; recipients verify sender authenticity

- Certificates: a CA signs “this public key belongs to this domain”

- Your browser ships with the CA’s public key pre-installed

- Every HTTPS connection you make verifies a chain of signatures during the handshake, before any application data is sent

Closing the MITM Loop

- Recall Attempt 2’s failure: Mallory substituted her own \(g^{\alpha'}\) because nothing bound \(g^\alpha\) to Alice

- Signatures fix exactly that gap! In every session:

- Alice sends \(g^\alpha\) and a signature \(\sigma = \text{Sign}(sk_A, g^\alpha)\)

- Bob verifies \(\sigma\) using \(A\), Alice’s long-term public key

- If it verifies, that \(g^\alpha\) really came from whoever holds \(sk_A\)

- Where does Bob get \(A\) in the first place? From a certificate signed by a CA that Bob already trusts

- Trust chain: browser trusts a root CA, root CA signs an intermediate, intermediate signs Alice’s certificate

- Mallory can still relay messages, but she can’t forge Alice’s signature without \(sk_A\), so the MITM attack is blocked

- This is essentially the TLS 1.3 handshake in miniature! We’ll formalise signatures and build this out properly in L06.

Conclusion

What did we learn?

What Did We Learn?

- Solved the key distribution problem that every symmetric primitive left open

- Two paths: DH/ECDH (key agreement) and TDF/RSA (key transport)

- Public-key encryption from TDFs gives us our first IND-CPA-secure scheme

- Forward secrecy and 3DH protect past sessions from future key compromise

- Previewed digital signatures: closing the MITM gap that MACs can’t, formal treatment in L06

Where Do We Go from Here?

- Next lecture: digital signatures and CCA security

- The \((G, S, V)\) triple and UF-CMA security game (mirrors the MAC structure from L04)

- RSA-FDH, Schnorr and ECDSA constructions

- IND-CCA2 and RSA-OAEP, closing the loop on textbook RSA

- Then in L07: HKDF, password-based KDFs, the E-t-M / AEAD composition proof, and full EPIC prep

- And L08: HPKE (RFC 9180) as the capstone construction

Questions?

Ask now, catch me after class, or email eoin@eoin.ai